Clinical Decision Support for Preventive Care: What It Is (and What It Isn’t)

Clinical decision support for preventive care, defined

Clinical decision support for preventive care is software that surfaces the right action, for the right patient, at the right moment in a clinical workflow. It synthesizes guidelines, patient data, and risk models into a recommendation a clinician can accept, modify, or ignore. It is not a diagnosis, not a replacement for judgment, and not a substitute for the longitudinal data that should sit underneath it.

That distinction matters more in 2026 than it did a year ago. The FDA released updated final guidance on Clinical Decision Support Software in January 2026, drawing a sharper line between CDS that qualifies as a regulated medical device and CDS that does not. At the same time, primary care clinicians are receiving an average of more than 56 alerts a day, and roughly half are overridden.

This article is for physicians being asked to evaluate, deploy, or trust CDS in a preventive setting. I’ll cover what it is, what it isn’t, where the evidence is strong, where it is shaky, and how to think about implementation without burning out a team.

What clinical decision support does in a preventive care workflow

CDS is any tool that takes patient-specific information, matches it against a clinical knowledge base, and produces a relevant recommendation at the point of care. In preventive medicine, that usually looks like:

- Reminders for overdue screenings (colonoscopy, mammography, DEXA, AAA ultrasound)

- Risk-stratified prompts (statin initiation based on ASCVD risk, lung cancer screening based on pack-year history)

- Vaccine schedules adjusted for age, comorbidities, and prior doses

- Lab interpretation in context (a hemoglobin A1c of 5.9% in a patient with rising fasting insulin reads differently than the same value in a lean, normoglycemic patient)

- Lifestyle and pharmacologic prompts based on trajectory rather than a single value

The useful CDS tools share three properties. They are patient-specific, not population-generic. They fire inside the workflow the clinician is already in. And they show their reasoning, so the clinician can override intelligently rather than reflexively.
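As a rough illustration of that match-and-recommend loop, here is a minimal Python sketch. The `Patient` fields, the `SCREENING_RULES` table, and the age and interval values in it are assumptions made for the example, not clinical guidance and not any vendor's API. The point is the shape: patient-specific input, a rule lookup, and a recommendation that carries its own reasoning.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Dict, Optional


@dataclass
class Patient:
    age: int
    # Hypothetical structure: screening name -> date of last completed exam.
    last_screening: Dict[str, date] = field(default_factory=dict)


@dataclass
class Recommendation:
    action: str
    reasoning: str  # shown to the clinician so an override can be informed


# Hypothetical knowledge base: name -> (min_age, max_age, interval_years).
# Values are illustrative only; a real system would cite its guideline source.
SCREENING_RULES = {
    "colonoscopy": (45, 75, 10),
}


def years_between(earlier: date, later: date) -> float:
    """Approximate elapsed years between two dates."""
    return (later - earlier).days / 365.25


def evaluate(patient: Patient, screening: str, today: date) -> Optional[Recommendation]:
    """Return a recommendation with its reasoning, or None if nothing is due."""
    min_age, max_age, interval = SCREENING_RULES[screening]
    if not (min_age <= patient.age <= max_age):
        return None  # outside the age band this rule covers
    last = patient.last_screening.get(screening)
    if last is None:
        return Recommendation(
            action=f"Consider {screening}",
            reasoning=f"Age {patient.age}; no prior {screening} on record.",
        )
    elapsed = years_between(last, today)
    if elapsed >= interval:
        return Recommendation(
            action=f"Consider {screening}",
            reasoning=(
                f"Last {screening} on {last.isoformat()} ({elapsed:.1f} years ago); "
                f"rule table interval is {interval} years."
            ),
        )
    return None  # screened within the interval, so stay silent
```

Returning `None` when nothing is due is a deliberate choice in this sketch: a rule that stays quiet is part of keeping alert volume down.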

A 2021 study in BMC Medical Informatics and Decision Making found that a point-of-care preventive CDS saved an average of 195.7 seconds of chart review per encounter without degrading decision accuracy. Roughly three minutes per visit, across a full panel, can be the difference between burnout and a workable schedule.

What clinical decision support is not

A few clarifications worth making explicit, because I see them confused often.

CDS is not autonomous AI. It does not decide. It recommends. The FDA’s 2026 guidance is explicit that software qualifies as non-device CDS only when the clinician can independently review the basis of the recommendation. Black-box outputs that a physician cannot interrogate fall on the device side of the line and require regulatory clearance.

CDS is not a diagnostic tool. A prompt to consider hereditary cancer screening based on family history is not a diagnosis of Lynch syndrome. The system flags. The clinician decides.

CDS is not the same as a population health dashboard. Dashboards show you who is overdue. CDS tells you what to do about it during the visit. Both are useful, and they are not interchangeable.

CDS is not a substitute for a longitudinal record. A reminder to check lipids is only as good as the data it is reading. If your patient’s last panel lives in a PDF in another health system, the CDS will either miss it or duplicate it.

CDS is not free of bias. Risk calculators built on cohorts that underrepresent women, non-white patients, or patients under 40 will under-flag those groups. The ASCVD pooled cohort equations are a well-documented example. A good CDS implementation makes its assumptions visible.

Clinical decision support vs autonomous clinical AI

These terms get used interchangeably and they shouldn’t.

| Dimension | Clinical Decision Support | Autonomous Clinical AI |
|---|---|---|
| Output | Recommendation | Decision or prediction |
| Clinician role | Reviews and acts on recommendation | May act without real-time human review |
| Transparency | Reasoning is inspectable | Often opaque (model weights, embeddings) |
| FDA status (2026) | Often non-device if criteria met | Typically a regulated medical device |
| Failure mode | Alert fatigue, missed nuance | Silent error, automation bias |
| Best use in preventive care | Guideline adherence, risk stratification, screening | Image triage, signal detection in passive data |

Both have a place. The mistake is conflating them, because the governance, the workflow, and the liability are different.

What the evidence says about CDS in preventive care

A Community Guide systematic review of CDSSs for cardiovascular disease prevention found median improvements of 3.8 percentage points for preventive services, 4.0 for clinical tests, and 2.0 for prescribing. Odds ratios across pooled analyses ran roughly 1.4 to 1.7 for the same outcomes.

Those are real effects. They are also modest. CDS does not turn a struggling practice into a high-performing one. It moves a competent practice from 70% guideline adherence to 75% or 78%. For preventive care, where small absolute gains across thousands of patients translate into prevented strokes and detected cancers, that math is worth doing. It is not a miracle.
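To see how an odds ratio translates into an absolute change, the arithmetic can be sketched in a few lines of Python. This is a back-of-the-envelope conversion, not a result from the review: the 70% baseline is the illustrative figure used above, and `apply_odds_ratio` is a helper name invented for this example.

```python
def apply_odds_ratio(baseline: float, odds_ratio: float) -> float:
    """Convert a baseline proportion and an odds ratio into the implied proportion."""
    if not 0.0 < baseline < 1.0:
        raise ValueError("baseline must be strictly between 0 and 1")
    odds = baseline / (1.0 - baseline)   # proportion -> odds
    new_odds = odds * odds_ratio         # apply the effect on the odds scale
    return new_odds / (1.0 + new_odds)   # odds -> proportion


# Illustrative baseline of 70% adherence with the quoted odds-ratio range.
for odds_ratio in (1.4, 1.7):
    after = apply_odds_ratio(0.70, odds_ratio)
    print(f"OR {odds_ratio}: {after:.1%}")
```

At a 70% baseline, odds ratios of 1.4 and 1.7 work out to about 77% and 80%. The percentage-point medians and the odds ratios need not agree exactly, because the absolute change implied by an odds ratio depends on the baseline rate it is applied to.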

Where the evidence is weakest:

- Long-term outcomes. Most CDS studies measure process (was the screening ordered?) rather than outcome (did mortality change?). The chain from prompt to prevented event is long.

- Sustainability. Effects often fade after 12 to 24 months as alerts get tuned out.

- Generalizability. A CDS that works in a tightly integrated EHR at an academic center may not transfer to a 3-physician practice on a different stack.

I find this evidence encouraging but not triumphant. CDS pays off when implemented carefully and decays when it isn’t.

Why alert fatigue breaks most CDS deployments

Alert fatigue is the most common reason a CDS deployment fails in practice.

Primary care clinicians receive an average of 56+ alerts per day. In some specialties that climbs to 100 or 200. One analysis of medication-related CDS alerts found only 7.3% were clinically appropriate. Override rates run 49% to 96%. A majority of ICU clinicians in one survey reported alert fatigue while still believing alert response was part of their job.

In preventive care, alert fatigue is particularly destructive because the alerts are non-urgent by definition. A statin reminder competes with a sepsis alert, a drug interaction warning, a missing-documentation prompt, and an inbox full of refill requests. The non-urgent prompt loses every time.

What separates the practices that benefit from CDS from the ones that just suffer from it:

- Aggressive alert pruning. One health system cut alert volume by 75% in a year by retiring alerts no one acted on. Removing low-value alerts is the highest-ROI CDS work most organizations never do.

- Passive over interruptive. A prompt that lives in a sidebar the clinician can scan beats a modal that demands a click. A 2024 study available in PMC replaced a burdensome interruptive alert with passive CDS and improved both adherence and clinician satisfaction.

- Patient-specific reasoning, shown. “Recommend statin: ASCVD 10-yr risk 14.2%, LDL 162, no current lipid-lowering therapy” is actionable. “Statin recommended” is noise.

- Closed feedback loop. If clinicians can flag bad alerts and see them retired, they trust the system. If overrides disappear into a void, they stop reading.
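The pruning step in that list can be sketched as a simple report over alert logs. This is a minimal Python example under stated assumptions: the `(alert_id, was_acted_on)` event format, the function name, and both thresholds are hypothetical, and a real review would involve clinicians rather than a cutoff alone.

```python
from collections import Counter
from typing import Iterable, List, Tuple


def retirement_candidates(
    events: Iterable[Tuple[str, bool]],
    min_fires: int = 50,            # hypothetical: ignore alerts with too little data
    max_action_rate: float = 0.05,  # hypothetical: at or below this, review for retirement
) -> List[Tuple[str, int, float]]:
    """Return (alert_id, fire_count, action_rate) for alerts almost no one acts on."""
    fires: Counter = Counter()
    acted: Counter = Counter()
    for alert_id, was_acted_on in events:
        fires[alert_id] += 1
        if was_acted_on:
            acted[alert_id] += 1

    candidates = []
    for alert_id, count in fires.items():
        rate = acted[alert_id] / count
        if count >= min_fires and rate <= max_action_rate:
            candidates.append((alert_id, count, rate))
    # Lowest action rate first, so the weakest alerts lead the review list.
    return sorted(candidates, key=lambda c: c[2])
```

The output is a review list, not an automatic kill switch. Feeding the result back to clinicians, and showing them which alerts were retired, is what closes the loop described above.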

What effective preventive care CDS looks like at the point of care

A 52-year-old woman comes in for an annual visit. Her last colonoscopy was at 47. The CDS flags her as due in five years and surfaces a one-line note: “USPSTF recommends rescreen at 10-year interval given normal index exam. Family history negative.” That’s it. No interruption, no required click. The clinician notes it in the visit summary and moves on.

Compare that to a hard-stop modal in the middle of charting that says “PATIENT IS DUE FOR COLONOSCOPY” with no context and no risk stratification. The first design supports the clinician’s decision. The second mainly documents that an alert was shown.

The difference is not the underlying recommendation. It is the design choices around when, how, and with what context the recommendation appears.

Why CDS fails without longitudinal patient data

CDS sits on top of data. If the data is fragmented across portals, PDFs, faxed reports, and a wearable app the patient subscribed to last spring, the CDS is making recommendations from a partial picture.

This is the gap I see most often in preventive medicine: practices invest in a CDS layer before they have the longitudinal record to feed it. The result is a system that confidently recommends a screening the patient already had, or misses a trajectory because it can only see the most recent value.

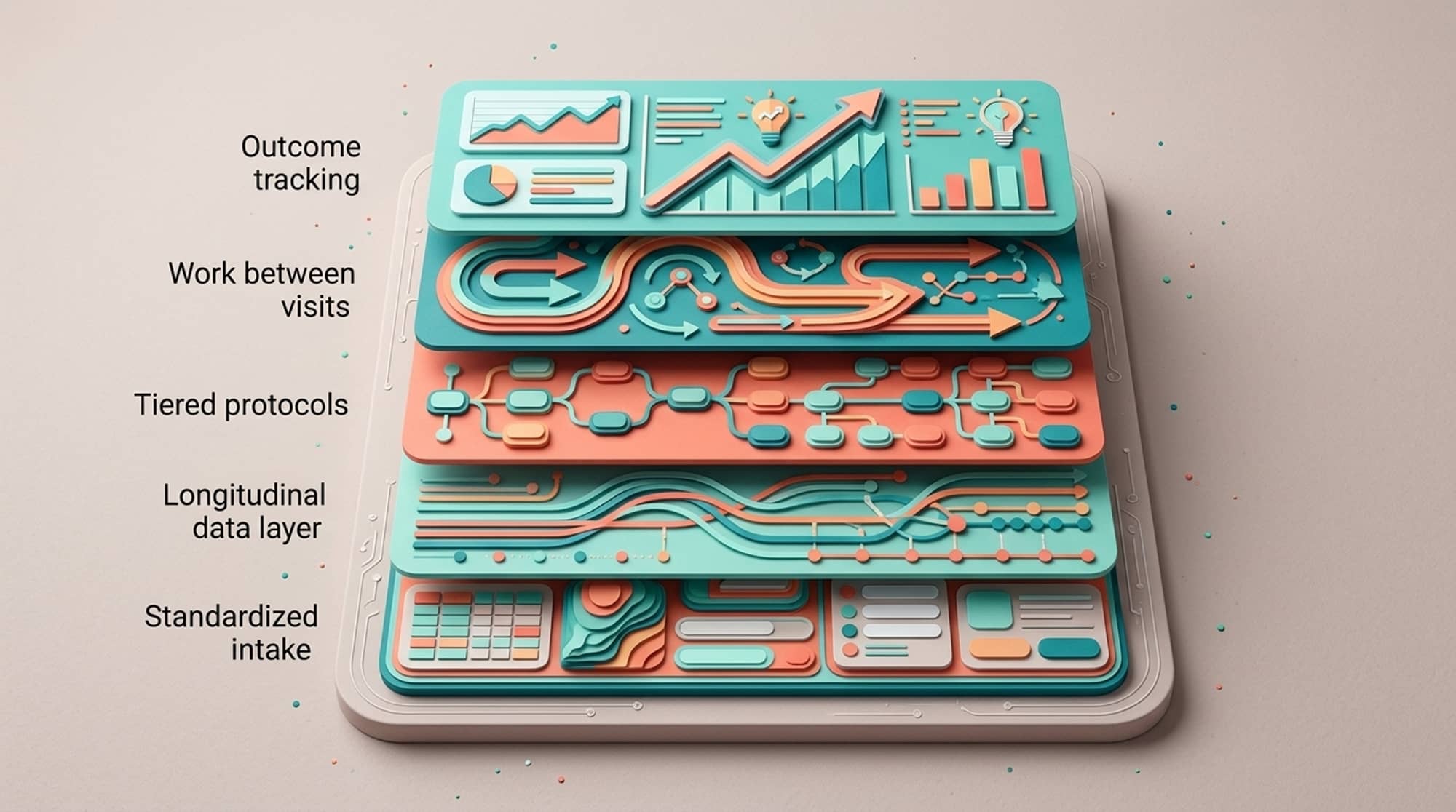

At Longevitix we built around this constraint first. CDS only works if the substrate underneath (unified labs, wearables, imaging, family history, and prior records) is clean and current. The decision support is the visible layer. The data plumbing is what makes it trustworthy. (For more on why trajectory beats single snapshots, see Beyond Biomarkers: From Diagnostic to Predictive Medicine.)
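A small Python sketch shows why the merge has to happen before the rule runs. It is not a description of any particular product's pipeline: the `Observation` fields, the source labels, and the choice to deduplicate on test name plus date are all assumptions made for illustration.

```python
from dataclasses import dataclass
from datetime import date
from typing import Iterable, List, Optional


@dataclass(frozen=True)
class Observation:
    test: str        # e.g. "lipid_panel"
    performed: date
    source: str      # hypothetical label such as "ehr" or "outside_pdf"


def merge_records(*sources: Iterable[Observation]) -> List[Observation]:
    """Combine records from several sources, keeping one copy per (test, date)."""
    seen = {}
    for records in sources:
        for obs in records:
            # First source wins when the same test on the same date appears twice.
            seen.setdefault((obs.test, obs.performed), obs)
    return sorted(seen.values(), key=lambda o: o.performed)


def latest(records: Iterable[Observation], test: str) -> Optional[Observation]:
    """Most recent observation of a given test, or None if there is none."""
    matches = [o for o in records if o.test == test]
    return max(matches, key=lambda o: o.performed) if matches else None
```

If the local record holds a lipid panel from 2021 and an outside PDF holds one from 2024, `latest` on the local list alone would report the 2021 result and a reminder would fire unnecessarily. Run on the merged list, it sees the 2024 panel.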

A clinician’s checklist for evaluating preventive care CDS

If you are reviewing a CDS tool for your practice or system, ask:

- What evidence base does each recommendation cite, and can a clinician click through to it?

- How are alerts prioritized, and what is the override rate?

- Can the system be tuned by your clinicians?

- What happens when the underlying guideline changes? How fast does the CDS update?

- How does it handle patients who fall outside the cohorts the underlying risk models were built on?

- What integrations does it require, and how does it handle missing data?

- Does it qualify as non-device CDS under the FDA’s 2026 guidance, or is it a regulated medical device? This affects your governance, not just the vendor’s.

Where clinical decision support for preventive care is headed in 2026

The FDA’s 2026 guidance signals that the regulatory line between CDS and AI-driven medical devices will get sharper, not blurrier. Expect more scrutiny on transparency and on whether clinicians can meaningfully review the basis for a recommendation. Expect the market to bifurcate: lightweight, transparent CDS on one side, regulated AI-as-a-device on the other.

For preventive medicine specifically, the interesting frontier is moving from “patient is overdue” prompts to “patient’s trajectory is changing” prompts. Out-of-pattern matters more than out-of-range. A fasting glucose drifting from 88 to 96 to 103 mg/dL over three years is a CDS use case that current systems handle poorly. The next generation will need to read trajectories across labs, wearables, and imaging together.
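A trajectory prompt can be sketched as a slope check rather than a threshold check. The Python example below is a minimal illustration under assumptions: it treats the three glucose readings as annual samples at years 0, 1, and 2, uses an ordinary least-squares slope, and the 5.0 units-per-year cutoff is a made-up number, not a clinical threshold.

```python
from typing import List, Tuple


def slope_per_year(points: List[Tuple[float, float]]) -> float:
    """Ordinary least-squares slope for (year, value) pairs."""
    n = len(points)
    if n < 2:
        raise ValueError("need at least two readings to estimate a trend")
    mean_x = sum(x for x, _ in points) / n
    mean_y = sum(y for _, y in points) / n
    sxx = sum((x - mean_x) ** 2 for x, _ in points)
    if sxx == 0:
        raise ValueError("readings must span more than one time point")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in points)
    return sxy / sxx


def trajectory_flag(points: List[Tuple[float, float]], slope_threshold: float) -> Tuple[bool, float]:
    """Flag a rising trend; returns (flagged, slope) so the reasoning can be shown."""
    slope = slope_per_year(points)
    return slope >= slope_threshold, slope


# Readings from the example above, assumed to be one per year.
readings = [(0, 88.0), (1, 96.0), (2, 103.0)]
flagged, slope = trajectory_flag(readings, slope_threshold=5.0)  # hypothetical cutoff
print(flagged, slope)
```

For these three readings the fitted slope is 7.5 per year. A production system would also need to handle irregular sampling, measurement noise, and multiple signals at once, which this sketch ignores.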

Key takeaways on clinical decision support for preventive care

CDS for preventive care is a tool. Used well, it lifts guideline adherence by a few percentage points and gives clinicians back minutes per visit. Used badly, it adds alerts to an already saturated workflow and trains physicians to ignore the system entirely.

The deciding factor is rarely the algorithm. It is the data underneath, the design of the alerts, and whether clinicians have any real authority over what fires and when.

FAQs

What is clinical decision support for preventive care?

Clinical decision support for preventive care is software that delivers patient-specific, evidence-based recommendations to clinicians at the point of care for screenings, vaccinations, risk-based prevention, and lifestyle interventions. It synthesizes guidelines and patient data into actionable prompts the clinician can accept or override. CDS does not diagnose or treat; it informs the clinician’s decision.

How is clinical decision support different from clinical AI?

CDS produces transparent recommendations a clinician reviews before acting, while autonomous clinical AI may generate predictions or decisions without real-time human review. Under the FDA’s January 2026 final guidance on Clinical Decision Support Software, software qualifies as non-device CDS only when the clinician can independently review the reasoning behind the recommendation. Opaque AI tools generally fall on the regulated medical device side of the line.

Does clinical decision support actually improve preventive care outcomes?

Systematic reviews show CDS produces median improvements of about 3.8 percentage points for preventive services completed, 4.0 for recommended clinical tests, and 2.0 for prescribed treatments, with pooled odds ratios around 1.4 to 1.7. The effects are real but modest, and they tend to fade over 12 to 24 months without active maintenance. Most evidence measures process outcomes rather than long-term mortality.

What is alert fatigue and why does it matter for preventive CDS?

Alert fatigue is the clinician desensitization that occurs when too many low-value alerts fire during a workday. Primary care clinicians receive an average of more than 56 alerts per day, override rates run 49% to 96%, and one analysis found only 7.3% of alerts were clinically appropriate. Preventive CDS is especially vulnerable because non-urgent prompts get drowned out by urgent ones.

What does the FDA’s 2026 guidance say about clinical decision support software?

The FDA’s January 2026 final guidance on Clinical Decision Support Software clarifies the criteria that distinguish non-device CDS from regulated medical device software. Tools that allow clinicians to independently review the basis for a recommendation generally qualify as non-device CDS, while opaque or autonomous tools are regulated as medical devices. The guidance affects how vendors design products and how health systems govern their use.

Can clinical decision support replace physician judgment in preventive care?

No. CDS is designed to augment clinician decision-making, not replace it. Risk calculators and guideline-based prompts often underperform in patients who fall outside the cohorts the underlying models were built on, including women, non-white patients, and younger adults. The clinician remains responsible for interpreting the recommendation in context.

What makes a clinical decision support implementation succeed or fail?

Successful implementations aggressively prune low-value alerts, prefer passive prompts over interruptive modals, surface the reasoning behind each recommendation, and give clinicians authority to retire alerts that aren’t useful. One health system reduced alert volume by 75% in a year by retiring unused alerts. Failure modes include over-alerting, opaque reasoning, and feeding the CDS from fragmented data.

Why does longitudinal patient data matter for preventive CDS?

CDS is only as accurate as the data feeding it. If labs, imaging, prior records, family history, and wearable data live in disconnected systems, the CDS will miss context or duplicate recommendations. Preventive medicine increasingly depends on detecting trajectory changes (out-of-pattern values) rather than single out-of-range results, which requires unified longitudinal data as the substrate beneath any CDS layer.