Geoffrey Fowler gave ChatGPT ten years of Apple Watch data. 29 million steps. 6 million heartbeats. A decade of health metrics meticulously tracked.

ChatGPT gave him an ‘F’ for cardiac health. He asked again. Lo and behold, the grade swung to a B. Same data, same patient, dramatically different assessment.

His actual cardiologist said he was at such low risk that insurance wouldn’t even cover additional testing. Dr. Eric Topol, one of the country’s leading cardiologists, called ChatGPT’s analysis “baseless” and warned people to ignore its medical advice.

This reveals a critical fork in the road for wearable health data: will it become a gateway to preventive medicine or a source of anxiety and potentially dangerous misinformation?

The Pattern Recognition Trap: Why Consumer AI Fails at Clinical Interpretation

The Washington Post investigation exposed fundamental architectural flaws in applying general AI models to medical interpretation:

Wild inconsistency. When you ask the same clinical question multiple times and receive grades ranging from F to B, you don’t have a diagnostic tool; you have a random number generator with a medical vocabulary. ChatGPT also couldn’t remember basic facts like the user’s age and gender despite having full access to their health records.

Context blindness. Both ChatGPT and Claude treated wearable metrics as clinically validated measurements, ignoring that consumer-grade sensors operate under different accuracy standards than clinical devices, that reference ranges vary by demographics, and that individual baselines matter more than population averages.

Confident wrongness. The AI delivered grades and assessments with authority that physicians found completely unjustified. This false confidence creates the most dangerous scenario in healthcare: patients making decisions based on information they believe to be reliable when it demonstrably isn’t.

Hallucinations at scale. Google’s health AI told pancreatic cancer patients to avoid high-fat foods, which is the exact opposite of correct guidance and could jeopardize their ability to tolerate chemotherapy. A broader study found AI health overviews have error rates as high as 44%.

Data Without Clinical Context Creates Harm in Both Directions

People with wearables are health-conscious. They’re tracking metrics because they care about optimization. When an AI tells them they’re failing at cardiac health, they listen.

Some will panic unnecessarily, scheduling expensive tests they don’t need or developing health anxiety that degrades their quality of life. Others who actually need intervention might get reassuring grades that delay treatment. Both scenarios undermine the entire purpose of continuous health monitoring.

The issue isn’t the data itself. Apple Watch heart rate measurements can reveal meaningful patterns over time. The problem is interpretation without medical expertise. A resting heart rate of 65 means something different for a trained marathoner versus a sedentary office worker. Sleep architecture patterns need context from age, lifestyle, medications, and other health factors.
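The point about individual baselines can be made concrete with a small sketch. The function name, the sample readings, and the idea of scoring a reading in standard deviations from a person's own history are illustrative assumptions, not a clinical method or any platform's implementation.

```python
from statistics import mean, stdev

def baseline_deviation(history, today):
    """Return how many standard deviations `today` sits from this person's own history."""
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        return 0.0
    return (today - mu) / sigma

# Hypothetical resting heart rates (bpm) for two people who both read 65 today.
runner_history = [48, 50, 49, 51, 50, 49, 50]
office_history = [66, 64, 65, 67, 65, 64, 66]

runner_z = baseline_deviation(runner_history, 65)   # far above this person's norm
office_z = baseline_deviation(office_history, 65)   # well within this person's norm
```

The same reading of 65 is a large departure for one person and unremarkable for the other, which is why a population-average cutoff alone says little.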

Consumer wearables generate metrics, but they don’t generate understanding. Real understanding requires clinical expertise integrated into the interpretation process from the beginning.

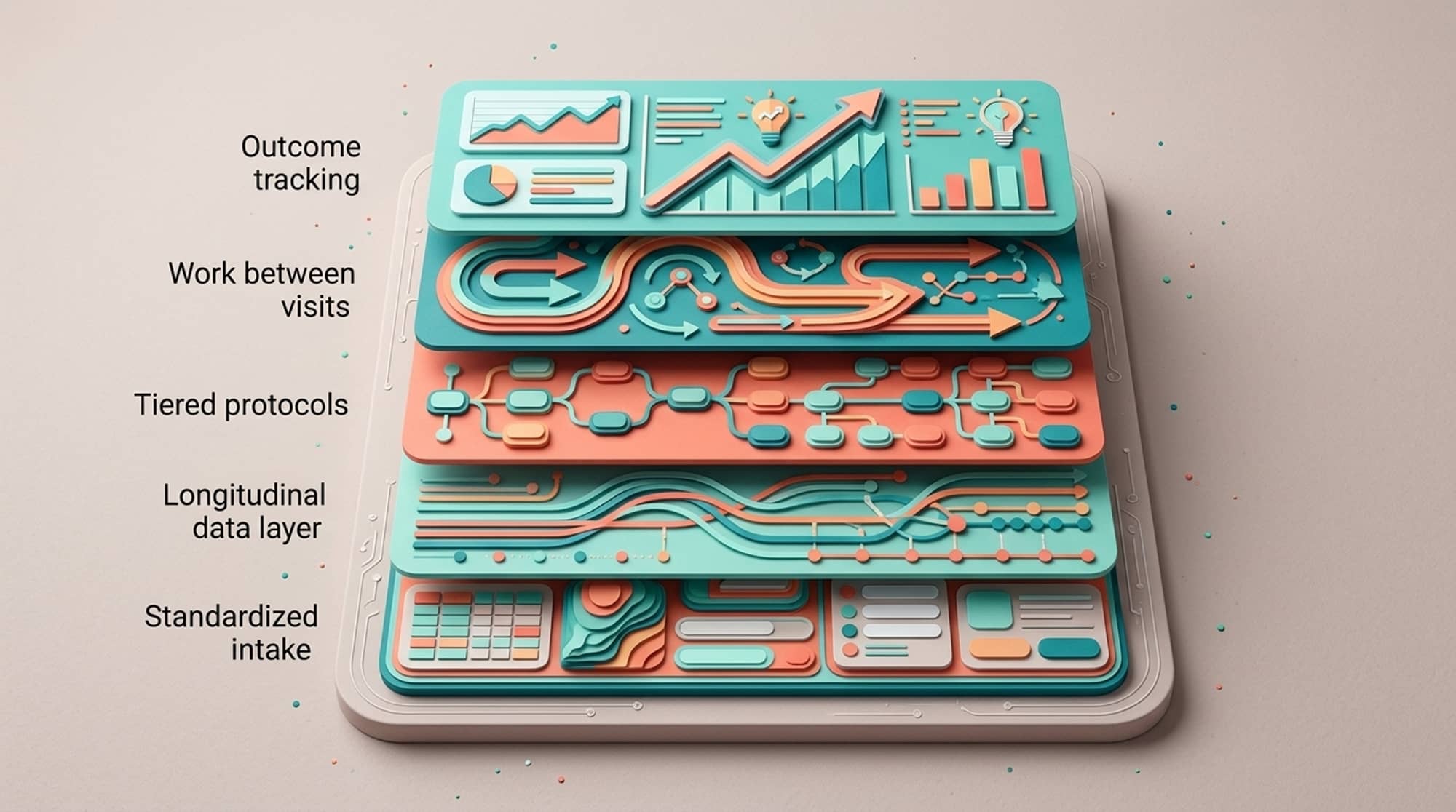

Clinical Infrastructure: The Four Pillars That Transform Data Into Care

Consider the same scenario with a platform architected around physician oversight. The difference isn’t incremental; it’s foundational. Imagine Geoffrey Fowler turning to AI for longevity health again, this time on a platform like Longevitix, whose patient flow is built around four integrated pillars that together create a closed loop of continuous care.

Data Aggregation and Unification: From Fragmentation to Clarity

A patient connects their Apple Watch through Longevitix. The wearable data flows into the Clinical Clarity Engine, which unifies information from labs (standard panels and specialty testing for genetics, hormones, microbiome), wearables (heart rate, HRV, sleep, activity, recovery), patient inputs (symptoms, dietary logs, adherence), and clinical assessments.

The platform transforms fragmented multi-source data into longitudinal trajectories. It identifies patterns across multi-modal streams that would be invisible when viewing sources separately. Most critically, it surfaces what matters while filtering noise.

The platform identifies that elevated resting heart rate correlates with poor sleep recovery, which coincides with rising inflammation markers (hs-CRP up 40%, homocysteine trending upward). It notes declining HRV over the past month and reduced deep sleep. This multi-system pattern gets flagged for clinical review as an integrated insight rather than disconnected metrics.

Clinical Interpretation and Personalized Intervention Plans

The flagged pattern surfaces to the physician through the clinical dashboard. The doctor sees full context: this patient recently started exercising after six months of inactivity, cortisol testing shows evening elevation, and training logs reveal consecutive high-intensity sessions without recovery days.

The physician recognizes overtraining syndrome with inadequate recovery, a pattern they’ve seen dozens of times. The diagnosis comes from clinical judgment, informed by comprehensive data that the AI organized effectively.

Protocol Automation becomes relevant here. Rather than creating a custom plan from scratch, the physician selects from evidence-based preventive care protocols validated for this presentation. The selected protocol includes reducing training frequency while monitoring HRV, implementing sleep optimization (magnesium glycinate, blackout environment, consistent schedule), evening cortisol management (adaptogens, stress reduction, earlier training), and anti-inflammatory support.

The platform personalizes this protocol based on patient data: adjusting supplement dosing for body weight and lab values, scheduling retesting at appropriate intervals (HRV daily, inflammatory markers in six weeks, cortisol in eight weeks), and establishing clear milestones.

If HRV doesn’t improve within expected timeframes, the platform flags the patient as a non-responder, prompting clinical reassessment. This scales personalization without scaling physician workload.
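As a rough sketch of the retest scheduling and non-responder flag described above: the retest intervals come from the text, while the function names, the `RetestPlan` type, and the 5% minimum HRV gain are hypothetical placeholders rather than validated clinical thresholds.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from statistics import mean

@dataclass
class RetestPlan:
    marker: str
    due: date

def schedule_retests(start):
    """Build retest dates using the intervals named in the text."""
    return [
        RetestPlan("inflammatory markers", start + timedelta(weeks=6)),
        RetestPlan("cortisol", start + timedelta(weeks=8)),
    ]

def is_non_responder(baseline_hrv, recent_hrv, min_gain_pct=5.0):
    """Flag for clinical reassessment when average HRV has not risen enough.

    `min_gain_pct` is an illustrative assumption, not a clinical cutoff.
    """
    gain_pct = (mean(recent_hrv) - baseline_hrv) / baseline_hrv * 100
    return gain_pct < min_gain_pct

plans = schedule_retests(date(2025, 1, 1))
needs_review = is_non_responder(50, [50, 51, 50])
```

The flag only routes the patient back to the physician; it does not change the protocol on its own.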

Real-Time Communication and Accountability: Closing the Implementation Gap

Here’s where preventive care typically fails: the gap between recommendation and implementation. Patients leave with plans they don’t follow. Interventions that would work often get abandoned.

Patient engagement and adherence automation addresses this directly. Three days into the protocol, wearable data shows the patient trained at high intensity despite the recovery plan. The platform sends a reminder explaining why this matters for their specific cortisol and inflammation patterns.

A week in, sleep data collected from wearables and integrated into the platform shows improved consistency. The platform recognizes this win and sends reinforcement, showing the correlation between better sleep habits and HRV trending upward.

The patient journey feature creates transparency about what happens next: upcoming retests, expected milestones, when to schedule follow-up. When six-week retesting shows inflammatory markers decreased (hs-CRP down 30%), the platform correlates these improvements with wearable trends and adherence data. The patient sees the direct relationship between following the protocol and achieving measurable improvements. The physician, with all of the relevant information at hand, keeps the patient accountable.

Education: Contextual Knowledge That Empowers Understanding

With research being published at an accelerating pace, we help physicians cut through the noise by providing education from curated articles integrated into the clinical workflow. When the patient receives their diagnosis and protocol, the physician is linked to articles explaining cortisol-inflammation relationships, why HRV matters for recovery, how sleep affects metabolic health, and the evidence for the specific interventions, and can share these with the patient as needed.

As the journey progresses, new content surfaces at appropriate times: understanding lab results, interpreting wearable trends, recognizing when to modify their approach. This transforms patients from passive recipients to informed, involved participants.

Safeguards That Prevent the ChatGPT Problem

Notice what didn’t happen in this flow: no AI hallucinations, no inconsistent assessments, no forgotten patient information, no recommendations contradicting clinical evidence.

This reliability comes from the Multi-Layer Clinical Safeguard (MLCS)™:

Clinical validation gates mean every protocol has been reviewed by physicians specializing in preventive medicine. The AI cannot suggest interventions that haven’t gone through validation.

Bias detection runs continuously, monitoring whether the platform performs differently across demographic groups. If the system identifies overtraining more readily in men than women with similar presentations, that bias gets flagged and corrected.

Real-time guardrails prevent operating outside safe parameters. If wearable data suggests concerning acute change (sudden HRV drop with resting heart rate spike), the platform immediately alerts the care team.

Continuous monitoring tracks real-world outcomes to catch drift or unintended consequences. When patients follow protocols, the platform tracks whether they improve. If a subset doesn’t respond as expected, that triggers protocol review.
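The real-time guardrail above (a sudden HRV drop combined with a resting heart rate spike) might look something like the following. This is a minimal sketch: the function name and both percentage thresholds are assumptions chosen for illustration, not clinically validated values.

```python
def acute_change_alert(hrv_baseline, hrv_today, rhr_baseline, rhr_today,
                       hrv_drop_pct=30.0, rhr_rise_pct=15.0):
    """Return True when HRV falls and resting heart rate rises past both thresholds.

    The default thresholds are illustrative placeholders only.
    """
    hrv_drop = (hrv_baseline - hrv_today) / hrv_baseline * 100
    rhr_rise = (rhr_today - rhr_baseline) / rhr_baseline * 100
    return hrv_drop >= hrv_drop_pct and rhr_rise >= rhr_rise_pct

# Hypothetical readings: HRV 60 -> 38 ms, resting heart rate 55 -> 66 bpm.
alert_care_team = acute_change_alert(60, 38, 55, 66)
```

Requiring both conditions keeps a single noisy reading from triggering an alert, and the result is a notification to the care team rather than a recommendation to the patient.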

Technology amplifies clinical judgment without replacing it. The platform identifies patterns needing attention. The physician determines meaning. The protocol library provides evidence-based options. Automation ensures consistent implementation. Monitoring confirms effectiveness. At no point does the AI make standalone medical decisions.

Deterministic Grounding: Why Medical AI Must Be Reproducible

The ChatGPT Health experiment showed that this kind of inconsistency is fundamentally incompatible with medical practice. Clinical decisions require reproducibility. When a physician orders the same lab test twice under identical conditions, they expect the same result. Large language models are probabilistic by nature, sampling from probability distributions rather than executing deterministic calculations.

The solution requires deterministic grounding mechanisms.

At Longevitix, we layer deterministic models underneath probabilistic LLM outputs. When the system analyzes patient data, it first runs through validation logic that operates deterministically: the same biomarker values trigger the same flags every time, protocol selection follows reproducible decision trees, and risk stratification applies consistent thresholds across all patients.

The LLM layer then assists with synthesis and communication, but the underlying medical logic remains deterministic. If a patient’s hs-CRP is 4.2 mg/L and their HRV shows a specific decline pattern, that combination triggers the same clinical flags and protocol recommendations every single time.

This architecture ensures consistency: input the same data with the same question, receive the same answer. Not approximately similar, identical. This reproducibility is non-negotiable for medical applications.
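To illustrate what a deterministic rule layer means in practice, here is a minimal sketch of a pure function that maps biomarker values to flags. The flag names, the function name, and the thresholds are assumptions for illustration; they are not Longevitix's actual rules.

```python
def clinical_flags(hs_crp_mg_l, hrv_change_pct):
    """Map inputs to flags with fixed rules: no sampling, no hidden state.

    Thresholds are illustrative assumptions, not validated cutoffs.
    """
    flags = []
    if hs_crp_mg_l > 3.0:
        flags.append("elevated_hs_crp")
    if hrv_change_pct <= -10.0:
        flags.append("hrv_decline")
    if len(flags) == 2:
        flags.append("review_recovery_protocol")
    return tuple(flags)

# The same inputs produce the same flags on every call.
first = clinical_flags(4.2, -15.0)
repeats = {clinical_flags(4.2, -15.0) for _ in range(100)}
```

Because the function depends only on its arguments, running it a hundred times yields a single distinct result. In this kind of design, a language model would only phrase the output, not decide which flags fire.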

Consumer AI chatbots cannot provide this deterministic foundation because they’re built purely on probabilistic language models. There’s no underlying clinical logic layer ensuring consistent medical reasoning.

Longitudinal Trajectories Reveal What Snapshots Miss

In Fowler’s case, ChatGPT compressed a decade of data into a single letter grade. This represents a fundamental misunderstanding of how preventive medicine works. What matters isn’t whether your average resting heart rate qualifies as “good” or “bad.” What matters is trajectory.

Is your HRV trending downward over six months despite maintaining similar activity? That signals something changed. Perhaps that change is cumulative stress, sleep degradation, or early metabolic dysfunction not yet visible in standard labs.

Are sleep patterns deteriorating in ways that correlate with other changes? Maybe deep sleep dropped when you started that new medication, or fragmentation increased after a stressful project began three months ago.

Is your recovery time from exercise getting consistently longer? This could indicate inadequate nutrition, training stress, hormonal imbalance, or thyroid dysfunction that hasn’t progressed enough to flag on standard testing.
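One simple way to express a trajectory, as opposed to a snapshot, is the least-squares slope of a metric over time. The sketch below assumes evenly spaced readings (for example, monthly averages); the function name and sample values are hypothetical.

```python
def trend_slope(values):
    """Least-squares slope of evenly spaced readings, in units per time step."""
    n = len(values)
    if n < 2:
        raise ValueError("need at least two readings to compute a trend")
    x_mean = (n - 1) / 2
    y_mean = sum(values) / n
    num = sum((x - x_mean) * (y - y_mean) for x, y in enumerate(values))
    den = sum((x - x_mean) ** 2 for x in range(n))
    return num / den

# Hypothetical monthly average HRV (ms) over six months.
monthly_hrv = [62, 60, 59, 57, 55, 54]
slope = trend_slope(monthly_hrv)  # negative: HRV is trending downward
```

Every one of these monthly values could look acceptable in isolation; the negative slope is what signals that something changed. Deciding what changed still requires clinical context.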

These trajectories only reveal themselves through continuous monitoring interpreted by clinicians who understand underlying biology. A physician working with Longevitix sees patterns contextualized alongside lab trends, intervention timing, life events, and medication changes.

An AI chatbot examining a data dump sees numbers without narrative. It can’t tell you that your HRV decline started two weeks after you began that sleep medication, or that your resting heart rate correlates with thyroid antibody progression.

Human Accountability Drives Outcomes More Than Perfect Algorithms

Here’s what ultimately determines success: sustained human accountability. A congestive heart failure program using AI-driven platforms cut 30-day readmissions by 50%. The AI didn’t replace nursing staff; instead, it identified which patients needed outreach and why, allowing care teams to focus efforts where human intervention would have maximum impact.

Wearable data reveals patterns. Clinical protocols suggest interventions. Patients receive clear guidance. None of that guarantees they’ll actually do it, or keep doing it when motivation wanes.

Sustained behavior change requires ongoing human support. The real-time communication pillar maintains continuous connection: checking adherence through objective data, sending contextual reminders tied to protocol steps, recognizing progress when metrics improve, and flagging concerning patterns needing attention.

This accountability loop transforms recommendations into sustained behavior change. The difference between knowing what to do and actually doing it consistently comes down to whether someone is supporting you through the process.

Infrastructure Requirements for Prevention-First Healthcare at Scale

The market is moving decisively toward prevention-first healthcare. Health insurers are committing billions to value-based care. Employers want programs keeping workforces healthy. This creates opportunity for practices with proper clinical infrastructure.

Generic consumer health apps cannot meet requirements that payers and employers have. They lack clinical validation, physician oversight, and demonstrated population-level outcomes. Provider-led platforms with clinical architecture can meet these requirements.

The outcome analytics capability transforms individual patient care into demonstrable population health metrics. When a practice works with 500 patients through employer partnerships, the platform aggregates results: percentage improving inflammatory markers, average changes in metabolic health scores, cardiovascular risk reduction, sleep quality improvements. It tracks intervention effectiveness and generates reports formatted for different audiences.
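The aggregation step can be sketched in a few lines. The data layout (a dict per patient mapping a marker name to a baseline and follow-up pair), the function name, and the sample cohort are all assumptions made for illustration.

```python
def percent_improved(patients, marker):
    """Percentage of patients whose follow-up value for `marker` is below baseline.

    Assumes lower is better for the marker, as with hs-CRP.
    """
    pairs = [p[marker] for p in patients if marker in p]
    if not pairs:
        return 0.0
    improved = sum(1 for baseline, followup in pairs if followup < baseline)
    return 100.0 * improved / len(pairs)

# Hypothetical cohort: (baseline, follow-up) hs-CRP values in mg/L.
cohort = [
    {"hs_crp": (4.2, 2.9)},
    {"hs_crp": (3.1, 3.4)},
    {"hs_crp": (2.8, 2.0)},
    {"hs_crp": (5.0, 3.5)},
]
share = percent_improved(cohort, "hs_crp")
```

A real report would also need to handle missing retests and markers where higher is better, which this sketch leaves out.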

This infrastructure scales preventive medicine from boutique practices serving dozens to programs serving thousands without sacrificing personalization or clinical rigor.

Trust Through Transparency: Why Architecture Determines Adoption

Trust in healthcare AI is fragile, and the ChatGPT Health incident damages it further. This erosion matters because we genuinely need AI in healthcare – the complexity of modern preventive medicine exceeds human cognitive capacity without technological assistance.

A single patient might have 50 to 100 biomarkers from specialty labs, continuous streams from multiple wearables, genetic variants affecting dozens of pathways, and longitudinal symptom tracking. Synthesizing this while seeing 15 to 20 patients daily exceeds what any clinician can do consistently without decision support.

The solution isn’t rejecting AI, but rather building AI that deserves trust. That requires transparency about what the system can and cannot do, clear documentation of how clinical decisions get made, physician oversight remaining visible and central, and honest acknowledgment of limitations.

When patients ask about wearable data in Longevitix, they see AI-identified patterns alongside physician interpretation. They understand the technology is a tool their doctor uses, not a replacement for medical judgment. They can access evidence bases for interventions and track whether they’re working.

Two Fundamentally Different Paths for Wearable Health Data

Wearable health data represents one of the most promising developments in preventive medicine. Continuous monitoring provides unprecedented visibility into how interventions affect real-world physiology.

That promise only materializes with clinical frameworks maintaining medical oversight while leveraging continuous monitoring capabilities.

The ChatGPT Health experiment demonstrates what happens without that framework. Sophisticated AI analyzing comprehensive data produces unreliable assessments that physicians dismiss. The data remains noise instead of signal. Worse, it creates false confidence or unnecessary alarm.

The alternative requires clinical infrastructure that treats wearables as one valuable input within comprehensive assessment, interprets patterns within multi-system biological context, implements evidence-based interventions through validated protocols, maintains physician oversight at every decision point, and demonstrates measurable outcomes through systematic tracking.

This isn’t theoretical. It’s how functional and integrative medicine practices already operate when they have the right operational infrastructure. The technology amplifies their ability to deliver personalized preventive care to larger populations without sacrificing quality.

The Architecture That Makes Wearable Data Clinically Meaningful

The future of wearable health data will be determined by whether we integrate continuous monitoring into proper clinical frameworks that maintain physician oversight while leveraging the unique capabilities this data provides.

Consumer AI chatbots cannot fulfill this role. They lack the clinical validation, systematic safeguards, physician oversight, and operational infrastructure that preventive medicine requires.

The alternative requires purpose-built clinical infrastructure: platforms that unify data into longitudinal patient views, implement validated protocols with evidence-based interventions, maintain physician decision-making authority at every clinical choice point, coordinate continuous care through sustained engagement and accountability, and demonstrate measurable population-level outcomes.

This infrastructure enables what ChatGPT Health cannot: transforming health tracking into health improvement through the combination of technology that organizes complexity and clinical wisdom that interprets meaning. The platform surfaces patterns that matter. Physicians determine what those patterns mean. Evidence-based protocols guide interventions. Automation ensures consistent implementation. Monitoring confirms effectiveness. Accountability keeps patients engaged.

Your Apple Watch data doesn’t need ChatGPT’s inconsistent assessments. It needs integration into clinical practice by physicians who understand how to interpret continuous monitoring within comprehensive health assessment.

That’s what Longevitix provides: the infrastructure that makes wearable data clinically meaningful, preventive medicine operationally feasible at scale, and physician expertise more effective at improving population health.