Beyond the Algorithm: Why AI’s Greatest Value in Longevity Medicine is What It Doesn’t Do

The Promise We Keep Hearing (And Why It’s Incomplete)

Walk into any health tech conference today and you’ll hear the same refrain: AI is revolutionizing healthcare. It diagnoses diseases earlier, predicts outcomes more accurately, personalizes treatment plans with precision.

All true. All impressive. All missing the point.

Because here’s what nobody talks about: the real revolution isn’t what AI can do. It’s what it can stop doing to us.

I’ve spent years watching technology promise transformation while delivering overwhelm. I learned to ask a different question: Does this tool amplify the signal we need to hear, or does it just add to the noise already drowning us?

In longevity medicine, where we try to identify subtle patterns across dozens of biomarkers, intervene before disease manifests, and personalize protocols for individual biology, this distinction matters. It’s everything.

The Noise Crisis Nobody Wants to Acknowledge

Robert Wachter’s The Digital Doctor chronicles what happened when we digitized healthcare without understanding the downstream effects. We traded paper charts for EHRs and called it progress. What we got was alert fatigue, documentation burden, and physicians spending more time staring at screens than looking at patients.

The average clinician now faces:

- 4,000+ data points per patient in a typical EHR

- 63 alerts per day (most irrelevant to clinical decisions)

- 2+ hours of documentation for every hour of patient care

- Biomarker panels that generate hundreds of values without context

This isn’t a data problem. It’s a noise problem.

And here’s the uncomfortable truth: most AI in healthcare makes it worse.

We build systems that generate more dashboards, more metrics, more “insights” without asking whether any of it helps a clinician make a better decision at 4 PM on a Thursday when they’re seeing their 18th patient of the day.

Eric Topol puts it bluntly in Deep Medicine: “We have created a system where doctors are doing data entry instead of doctoring.” AI was supposed to fix this. Instead, we’ve automated the generation of more noise.

What Signal Means in Clinical Practice

Let’s ground this in reality.

A 52-year-old patient comes in with complaints of fatigue and brain fog. Standard labs are unremarkable. In traditional medicine, this is where the conversation ends or spirals into expensive, unfocused testing.

In longevity medicine, you look at:

- Metabolic markers (insulin, glucose variability, lactate)

- Thyroid function (not just TSH, but T3, reverse T3, antibodies)

- Inflammatory markers (hs-CRP, homocysteine, oxidized LDL)

- Hormonal status (cortisol patterns, sex hormones, DHEA)

- Nutritional markers (methylation status, micronutrients)

- Sleep architecture from wearables

- Activity patterns and HRV trends

That’s not 4,000 data points. But it’s 50 to 100 values that need synthesis, contextualization, and translation into actionable protocols.

The signal is the pattern that explains why this specific patient feels terrible despite “normal” labs. Perhaps borderline insulin resistance combined with subclinical hypothyroidism and poor sleep recovery creates multi-system dysfunction that standard testing missed. Looking closely at trends helps physicians see where things are headed and take that trajectory into account in the care plan.

The noise is everything that obscures that pattern: irrelevant reference ranges, false positive alerts, disconnected data sources, algorithmic outputs that lack clinical context, and AI-generated recommendations that sound sophisticated but ignore the patient’s actual phenotype.

Where Most Healthcare AI Fails

Peter Lee, Carey Goldberg, and Isaac Kohane’s The AI Revolution in Medicine explores the promise of GPT-4 and similar models in healthcare. The capabilities are impressive: natural language processing of clinical notes, automated summarization of literature, even diagnostic suggestions based on symptom patterns.

But they also document the failure modes.

Hallucinations in High-Stakes Contexts

Large language models generate plausible-sounding medical information that’s wrong. In longevity medicine, where we work in areas without clear evidence consensus, this creates catastrophic risk.

Bias Amplification

AI trained on historical data reproduces historical inequities. Women’s symptoms get minimized. Certain populations get undertreated. Reference ranges that don’t account for genetic diversity get applied universally.

Context Blindness

An algorithm sees a TSH of 3.2 and calls it normal. A physician sees that same TSH in a patient with symptoms, a family history, and rising thyroid antibodies, and checks T3 and T4 to complete the picture. The physician recognizes early Hashimoto’s that needs intervention.

The Dangerous Middle Ground

The AI sounds authoritative but can’t replace clinical judgment. This is where errors happen. Physicians either over-trust algorithmic outputs or become so overwhelmed by contradictory AI suggestions that they ignore valuable signals.

The American Hospital Association’s recent letter to the FDA on AI-enabled medical devices (December 2025) underscores these concerns. They call for stronger oversight frameworks to ensure AI tools meet rigorous safety and effectiveness standards before clinical deployment.

This isn’t a technology problem. It’s an implementation philosophy problem.

Most healthcare AI gets built to add capabilities: more analysis, more predictions, more recommendations.

What we need is AI that reduces cognitive load while maintaining (or improving) decision quality.

There’s a fundamental difference.

The Alignment Problem in Healthcare

Brian Christian’s The Alignment Problem explores a critical challenge in AI development: ensuring that machine learning systems align with human values and intent. In healthcare, this isn’t theoretical philosophy. It’s patient safety.

Consider what “alignment” means in longevity medicine:

Clinical alignment: Does the AI recommendation advance the patient’s health goals, or does it optimize for easily measurable metrics that don’t matter?

Ethical alignment: Is the system fair across different populations, or does it favor certain groups while disadvantaging others?

Operational alignment: Does it integrate into real clinical workflows, or does it demand that physicians adapt their practice to serve the algorithm?

Value alignment: Does it support the kind of medicine we want to practice (personalized, preventative, focused on root causes), or does it push us back toward reactive, protocol-driven care?

When AI systems lack proper alignment, they don’t just fail. They fail in ways that erode trust, perpetuate inequity, and increase the noise-to-signal ratio in clinical decision-making.

A biased algorithm that systematically undertreats certain populations isn’t providing signal. It’s generating noise that threatens the entire foundation of equitable care.

An AI that produces impressive-sounding recommendations without understanding clinical context isn’t helpful. It’s dangerous.

A Different Approach: Signal Amplification, Not Feature Addition

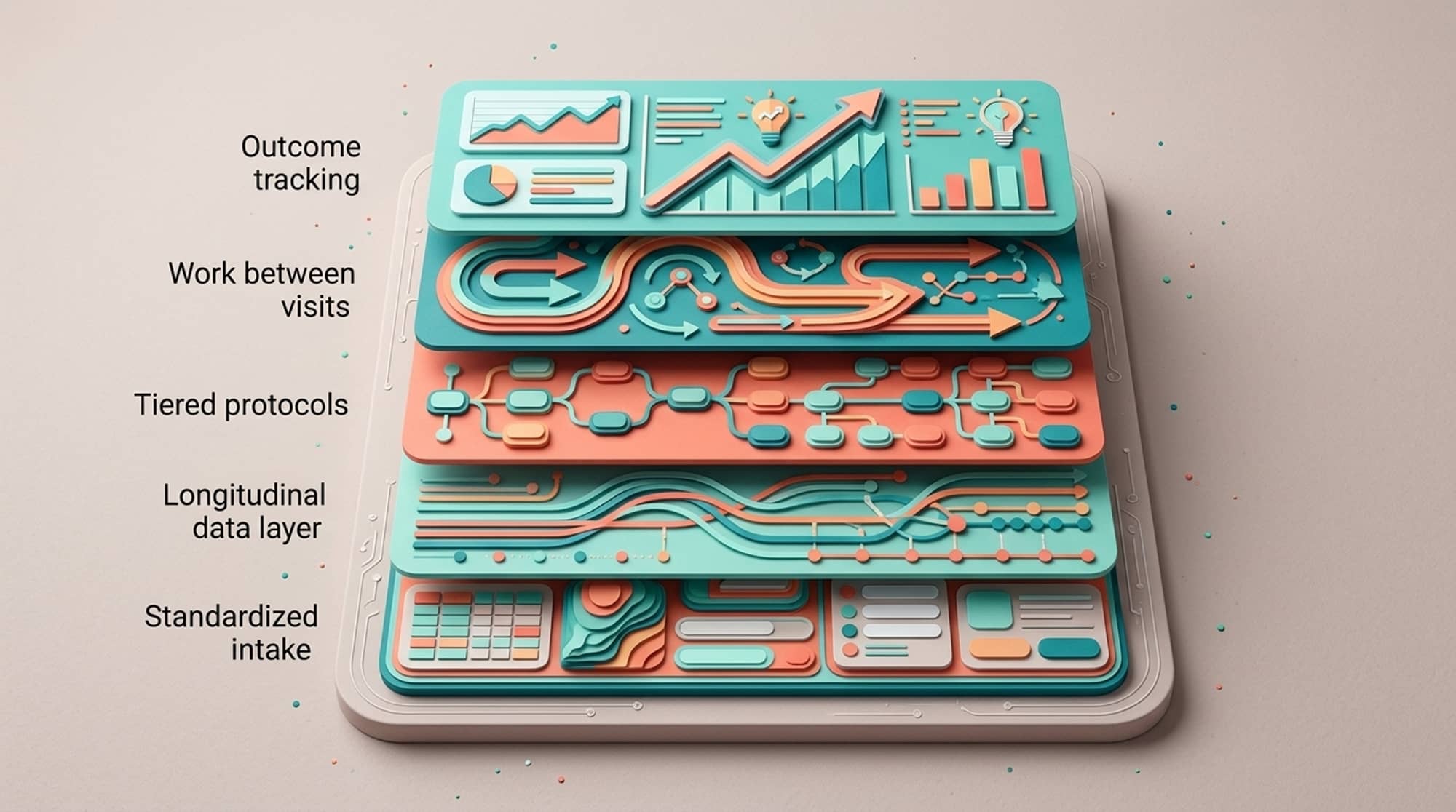

At Longevitix, we started with a different question: What if AI’s primary job isn’t to add more intelligence to healthcare, but to remove more noise?

This led to our Clinical Clarity Engine. It’s a different architecture than most healthcare AI systems.

Instead of building an AI that generates more recommendations, we built one that does three things:

Filters Aggressively

Most biomarkers don’t need action most of the time. Our system identifies what matters for this patient’s current state and goals. It suppresses everything else. Less information. Better decisions.
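As a rough illustration of what “filter aggressively” can mean in code, here is a minimal sketch. It is not the Clinical Clarity Engine’s implementation. The `Marker` fields, the `needs_attention` rule, and the 10% change threshold are all assumptions made up for this example, and the numbers are placeholders rather than clinical reference ranges. One deliberate choice: an out-of-range value is never suppressed, even when it falls outside the patient’s stated goals.

```python
from dataclasses import dataclass


@dataclass
class Marker:
    name: str
    value: float
    low: float            # lower bound of the range the clinician chose
    high: float           # upper bound of that range
    prior_value: float    # previous measurement, for a crude stability check
    domains: frozenset    # e.g. frozenset({"metabolic"})


def needs_attention(m: Marker, goal_domains: set, max_change: float = 0.10) -> bool:
    """Show a marker if it is out of range, or if it belongs to a goal
    domain and moved by more than max_change (relative) since last time."""
    out_of_range = not (m.low <= m.value <= m.high)
    relevant = bool(m.domains & goal_domains)
    baseline = abs(m.prior_value) or 1.0  # avoid division by zero
    moved = abs(m.value - m.prior_value) / baseline > max_change
    return out_of_range or (relevant and moved)


def filter_panel(panel, goal_domains):
    """Split a panel into markers to show and markers to suppress."""
    shown = [m for m in panel if needs_attention(m, goal_domains)]
    suppressed = [m for m in panel if not needs_attention(m, goal_domains)]
    return shown, suppressed


# Placeholder values for illustration only.
panel = [
    Marker("fasting_insulin", 14.0, 2.0, 20.0, 9.0, frozenset({"metabolic"})),
    Marker("sodium", 140.0, 135.0, 145.0, 139.0, frozenset({"electrolytes"})),
    Marker("hs_crp", 4.2, 0.0, 3.0, 4.0, frozenset({"inflammation"})),
    Marker("hrv", 48.0, 40.0, 100.0, 47.0, frozenset({"sleep"})),
]
shown, suppressed = filter_panel(panel, goal_domains={"metabolic", "sleep"})
print([m.name for m in shown])
print([m.name for m in suppressed])
```

With these inputs, the in-range but fast-moving insulin value and the out-of-range hs-CRP are shown, while the stable sodium and HRV values are suppressed. The hard part in practice is choosing the rule, not writing it.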

Contextualizes Relentlessly

A glucose value isn’t just a number. It exists in the context of insulin levels, activity patterns, meal timing, stress biomarkers, and sleep quality. Our AI understands these relationships and presents integrated patterns, not isolated data points.
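One widely used example of reading glucose in the context of insulin is the HOMA-IR index, computed as fasting glucose (mg/dL) times fasting insulin (µU/mL) divided by 405. The sketch below is only an illustration of the idea of an integrated value. Nothing in this article says the Clinical Clarity Engine uses HOMA-IR, and the input numbers are invented. Interpretation cutoffs vary by population, so none are given here.

```python
def homa_ir(fasting_glucose_mg_dl: float, fasting_insulin_uU_ml: float) -> float:
    """HOMA-IR = glucose (mg/dL) x insulin (uU/mL) / 405."""
    return fasting_glucose_mg_dl * fasting_insulin_uU_ml / 405.0


# Same glucose, different insulin: the isolated glucose value hides the difference.
same_glucose = 95.0
for insulin in (5.0, 15.0):
    index = homa_ir(same_glucose, insulin)
    print(f"glucose={same_glucose} insulin={insulin} HOMA-IR={index:.2f}")
```

The two hypothetical patients share an identical glucose reading, yet the combined index comes out at roughly 1.17 for one and 3.52 for the other. That gap is invisible if glucose is presented alone.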

Protects Against Errors Systematically

This is where our Multi-Layer Clinical Safeguard (MLCS)™ comes in. Accurate algorithms aren’t enough. You need architectural safeguards against the failure modes we know exist:

Layer 1: Training data curation. We don’t throw data at models. We ensure training sets are validated, diverse, and represent real-world longevity medicine populations, not just whatever data was easiest to obtain.

Layer 2: Algorithmic bias detection. Active monitoring for performance disparities across demographic groups. If the system performs differently for different populations, that’s noise we eliminate.

Layer 3: Clinical validation gates. Physician oversight on protocols and recommendations before they reach clinical use. AI can suggest. Only clinicians can approve for implementation.

Layer 4: Real-time guardrails. Built-in constraints that prevent hallucinations, flag out-of-bounds recommendations, and require human verification for high-stakes decisions.

Layer 5: Continuous monitoring. Ongoing assessment of real-world outcomes to catch drift, errors, or unintended consequences that emerge over time.

This isn’t sexy. It doesn’t make for exciting demos. But it’s how you build AI that works in clinical practice, not just in PowerPoint presentations.
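To make Layers 3 and 4 concrete, here is a minimal sketch of a guardrail followed by a validation gate. Everything in it is hypothetical: the `Suggestion` type, the `VALIDATED_PROTOCOLS` allow-list, the dose bounds, and the function names are assumptions for illustration, not the MLCS implementation. The point it tries to show is structural: the automated step can block a suggestion or queue it for review, but only a separate clinician action can mark it approved.

```python
from dataclasses import dataclass, field
from enum import Enum


class Status(Enum):
    BLOCKED = "blocked"            # failed a hard constraint
    NEEDS_REVIEW = "needs_review"  # waiting for a clinician
    APPROVED = "approved"          # a clinician signed off


@dataclass
class Suggestion:
    protocol_id: str
    daily_dose: float
    high_stakes: bool
    status: Status = Status.NEEDS_REVIEW
    reasons: list = field(default_factory=list)


# Hypothetical allow-list: protocol id -> (min dose, max dose).
VALIDATED_PROTOCOLS = {"protocol_a": (0.0, 10.0)}


def apply_guardrails(s: Suggestion) -> Suggestion:
    """Layer 4 sketch: block anything unknown or out of bounds."""
    bounds = VALIDATED_PROTOCOLS.get(s.protocol_id)
    if bounds is None:
        s.status = Status.BLOCKED
        s.reasons.append("protocol not in validated set")
    elif not (bounds[0] <= s.daily_dose <= bounds[1]):
        s.status = Status.BLOCKED
        s.reasons.append("dose outside validated bounds")
    else:
        s.status = Status.NEEDS_REVIEW  # never auto-approved
        if s.high_stakes:
            s.reasons.append("high-stakes: human verification required")
    return s


def clinician_approve(s: Suggestion, clinician_id: str) -> Suggestion:
    """Layer 3 sketch: the only path to APPROVED goes through a clinician."""
    if s.status is not Status.NEEDS_REVIEW:
        raise ValueError("only suggestions awaiting review can be approved")
    s.status = Status.APPROVED
    s.reasons.append(f"approved by {clinician_id}")
    return s


checked = apply_guardrails(Suggestion("protocol_a", 5.0, high_stakes=True))
print(checked.status, checked.reasons)
print(clinician_approve(checked, "dr_example").status)
```

Note that `apply_guardrails` has no code path that returns `Status.APPROVED`. In this sketch, “AI can suggest, only clinicians can approve” is enforced by the shape of the code rather than by policy alone.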

What This Looks Like in Practice

Let’s return to that 52-year-old patient with fatigue and brain fog.

Without Proper AI (or With Typical Healthcare AI)

The physician faces dozens of lab values, multiple wearable data streams, contradictory online symptom checkers the patient consulted, and an EHR alerting about a dozen “abnormal” values that are within functional ranges. They order more tests, refer to specialists, or (most commonly) tell the patient everything looks fine and suggest stress management.

With Signal-Amplifying AI

The system has already:

- Identified the three most relevant patterns across metabolic, thyroid, and sleep data

- Suppressed the noise from values that are stable and unremarkable

- Contextualized the “borderline” findings within this patient’s historical trends

- Cross-referenced against similar patient phenotypes to predict intervention responses

- Flagged the interaction between poor sleep architecture and insulin sensitivity that standard analysis would miss
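The historical-trend item in the list above can be sketched as a simple slope check: a value that still sits inside its range but has been climbing steadily. This is a minimal sketch under stated assumptions, not how any particular product does it. The function names, the range bounds, and the slope threshold are placeholders invented for the example and are not clinical guidance.

```python
def slope_per_year(days, values):
    """Ordinary least-squares slope, converted from per-day to per-year."""
    n = len(days)
    mean_x = sum(days) / n
    mean_y = sum(values) / n
    sxx = sum((x - mean_x) ** 2 for x in days)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(days, values))
    return sxy / sxx * 365.0


def in_range_but_drifting(days, values, low, high, min_slope_per_year):
    """True when the latest value is 'normal' but the upward trend is steep."""
    latest = values[-1]
    in_range = low <= latest <= high
    return in_range and slope_per_year(days, values) >= min_slope_per_year


# Placeholder readings taken one year apart; illustration only.
days = [0, 365, 730]
tsh = [1.8, 2.5, 3.2]
print(round(slope_per_year(days, tsh), 2))
print(in_range_but_drifting(days, tsh, low=0.4, high=4.0, min_slope_per_year=0.5))
```

With these made-up readings the slope is about 0.7 per year, so the latest value would pass a single-point range check while the trend check flags it for a closer look.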

The physician sees a clear picture: multi-system suboptimal function with a probable intervention sequence. The technology didn’t tell them what to do. It removed 90% of the cognitive noise so they could think clearly about the 10% that matters.

This is what Topol means when he talks about AI making healthcare “human again.” Not by replacing clinical judgment, but by creating the space for it to function properly.

The Trust Equation

Here’s what keeps me awake: the erosion of trust in healthcare AI.

When AI systems make errors that surprise clinicians, trust decreases.

When algorithms produce recommendations that ignore obvious clinical context, trust decreases.

When “black box” systems generate outputs without explainable reasoning, trust decreases.

When bias in AI perpetuates health inequities, trust doesn’t just decrease. It shatters.

Once clinicians can no longer calibrate how much to trust the technology, one of two things happens:

- They ignore it (wasting the investment and opportunity)

- They over-rely on it because they’re too overwhelmed to evaluate it critically (creating dangerous vulnerabilities)

Neither is acceptable in longevity medicine, where we often operate in areas without strong evidence consensus and where individual variation is enormous.

This is why physician oversight isn’t optional in our system. It’s architectural. The MLCS™ framework ensures that clinical wisdom remains the final arbiter of patient care decisions. AI provides clarity. Physicians provide judgment.

The Road Ahead: Signal Over Sophistication

The next wave of healthcare AI faces a choice:

Path 1: Continue building systems that analyze more data, generate more insights, and require physicians to become data scientists to use them. This path leads to more impressive technology and worse clinical outcomes.

Path 2: Build systems that prioritize signal over noise, that understand their job is to amplify clinical wisdom rather than replace it, and that recognize the limitations of algorithmic decision-making in complex biological systems.

At Longevitix, we chose Path 2.

Not because it’s easier. It’s harder. It requires deeper medical expertise to know what to filter out, more sophisticated engineering to build proper safeguards, and the discipline to resist adding features that look impressive but increase cognitive load.

But it’s the only path that scales personalized, predictive longevity medicine to the clinicians who need it and the patients who would benefit from it.

The Bottom Line

AI in longevity medicine should be invisible when it works well.

The physician shouldn’t think: “This AI is impressive.”

They should think: “I can finally see clearly what’s happening with this patient.”

That’s the signal we’re after. Everything else is noise.

And in medicine, noise doesn’t just waste time. It costs lives.

Frequently Asked Questions

How is AI in longevity medicine different from AI in traditional healthcare?

Traditional healthcare AI focuses on diagnosing existing disease: identifying tumors in imaging, flagging abnormal lab values, or predicting acute events like sepsis. Longevity medicine AI operates in a different paradigm. It identifies subtle multi-system patterns before they manifest as disease.

This requires AI that can contextualize “borderline” values that traditional systems would ignore, recognize patterns across metabolic, hormonal, inflammatory, and lifestyle data simultaneously, account for enormous individual variation in what “optimal” looks like, and support preventative interventions rather than reactive treatment.

The challenge isn’t just technical sophistication. It’s clinical sophistication. Longevity medicine AI needs deep understanding of functional medicine principles, not just pattern matching against disease databases. This is why physician oversight and clinical validation are essential components of any legitimate longevity medicine AI platform.

What are AI hallucinations in healthcare, and why are they dangerous?

AI hallucinations occur when large language models generate confident, plausible-sounding information that’s wrong. In healthcare, this looks like citing non-existent research studies to support a recommendation, inventing drug interactions that don’t exist (or missing ones that do), generating treatment protocols that sound reasonable but contradict established evidence, or providing dosing recommendations that are dangerous.

The danger isn’t that the AI is obviously wrong. It’s that it sounds authoritative enough to be trusted without verification. In longevity medicine, where many practitioners already operate outside conventional guidelines and evidence is often limited, hallucinations can lead clinicians down dangerous paths.

This is why systems like Longevitix’s Clinical Clarity Engine include specific safeguards against hallucinations: constraining AI outputs to validated clinical protocols, requiring physician approval for new recommendations, and building real-time guardrails that flag out-of-bounds suggestions before they reach clinical use.

How do you prevent AI bias in personalized medicine?

AI bias in healthcare occurs when systems perform differently (usually worse) for certain demographic groups. This happens because of training data that over-represents some populations and under-represents others. In personalized medicine, this is particularly insidious because the entire premise is individualization.

Preventing bias requires multiple interventions. First, training data curation: ensuring datasets represent diverse populations across age, sex, ethnicity, and genetic backgrounds. This isn’t just about volume. It’s about deliberate inclusion of underrepresented groups.

Second, performance monitoring: testing whether the AI performs equally well across different demographic groups. If a system accurately predicts metabolic dysfunction in men but systematically misses it in women, that’s bias that needs correction.

Third, reference range adjustment: understanding that “normal” varies by population. A system trained primarily on data from people of European ancestry can misinterpret values in Asian or African populations where different reference ranges apply.

Fourth, clinical validation with diverse practitioners: ensuring physician oversight includes clinicians who treat diverse patient populations and can identify when AI recommendations don’t translate across different groups.

The Multi-Layer Clinical Safeguard approach includes bias detection as Layer 2, not as an afterthought, but as a core architectural requirement.
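The performance-monitoring step described above can be sketched as a per-group sensitivity comparison. This is a minimal illustration, not the MLCS code: the record format, the function names, and the 0.05 maximum gap are assumptions, and the counts are fabricated for the example. Sensitivity is only one of several metrics a real audit would compare.

```python
from collections import defaultdict


def sensitivity_by_group(records):
    """records: iterable of (group, actually_positive, predicted_positive).
    Returns the share of true cases the model caught, per group."""
    caught = defaultdict(int)
    positives = defaultdict(int)
    for group, actual, predicted in records:
        if actual:
            positives[group] += 1
            if predicted:
                caught[group] += 1
    return {g: caught[g] / positives[g] for g in positives}


def disparity(sensitivities, max_gap=0.05):
    """Return the largest gap between groups and whether it exceeds max_gap."""
    gap = max(sensitivities.values()) - min(sensitivities.values())
    return gap, gap > max_gap


# Fabricated counts: the model catches 9 of 10 cases in men, 6 of 10 in women.
records = (
    [("men", True, True)] * 9 + [("men", True, False)] * 1
    + [("women", True, True)] * 6 + [("women", True, False)] * 4
    + [("men", False, False)] * 5
)
per_group = sensitivity_by_group(records)
gap, flagged = disparity(per_group)
print(per_group)
print(f"gap={gap:.2f} flagged={flagged}")
```

Here the model finds 90% of true cases in one group and 60% in the other, so the check flags a gap of about 0.30. That is the “accurately predicts in men but misses in women” scenario expressed as a number someone can monitor.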

Can AI replace clinical judgment in preventative care?

No. Any company claiming otherwise is selling snake oil.

Here’s why AI cannot replace clinical judgment in preventative care. Context is everything. A patient’s lab values exist within the context of their symptoms, genetic background, lifestyle, goals, and previous interventions. AI can identify patterns in data. Only clinicians can integrate that pattern with the full human context.

Biological systems are non-linear. What works for 70% of patients can be wrong for this specific patient. Clinical experience teaches pattern recognition that goes beyond what any current AI can capture.

Evidence gaps are enormous. In longevity medicine, we often operate in areas without RCT evidence. Clinical judgment bridges the gap between what we know and what we need to decide.

Values and preferences matter. Some patients want aggressive optimization. Others want minimal intervention. Some prioritize lifespan. Others prioritize current quality of life. These are human decisions, not algorithmic ones.

What AI should do is amplify clinical judgment by reducing cognitive load through intelligent filtering, highlighting patterns a clinician might miss in complex multi-system data, providing decision support grounded in evidence and similar patient outcomes, and handling administrative burden so clinicians can focus on thinking.

The goal isn’t replacement. It’s augmentation.

What should clinicians look for when evaluating AI tools for their practice?

When evaluating healthcare AI, ask these critical questions.

Does it reduce or increase your cognitive load? If you spend more time interpreting AI outputs than you would have spent analyzing raw data, it’s not helping. Good AI makes your job easier; it doesn’t add another layer of complexity.

Is there physician oversight in the system architecture? AI without clinical validation is dangerous. Look for platforms where physicians have reviewed and approved protocols, not just where they’re end-users of black box algorithms.

Can it explain its reasoning? “Black box” AI that can’t show why it made a recommendation is unsuitable for clinical use. You need to understand the logic to know when to trust it and when to override it.

What safeguards exist against errors? Ask specifically about training data diversity, bias detection, hallucination prevention, and continuous monitoring. If the company can’t articulate their safeguard architecture, walk away.

Does it integrate into your actual workflow? The most sophisticated AI is useless if it requires you to completely restructure your practice. Look for systems that adapt to clinical reality, not systems that demand you adapt to them.

What happens when it’s wrong? No AI is perfect. The question is: does the system fail gracefully with clear error handling, or does it fail dangerously by producing overconfident, incorrect outputs?

Be skeptical of any AI platform that promises to “revolutionize” your practice. The best AI doesn’t feel revolutionary in use. It feels like finally having the right tool for the job.

How does AI handle the complexity of multi-system dysfunction in longevity medicine?

Multi-system dysfunction is where most healthcare AI fails. Traditional diagnostic AI looks for single-system pathology: a tumor, an infection, a specific enzyme deficiency. Longevity medicine requires understanding how metabolic, hormonal, inflammatory, and lifestyle factors interact across systems.

For example, a patient with fatigue might have borderline insulin resistance (metabolic system), subclinical hypothyroidism (endocrine system), elevated inflammatory markers (immune system), and poor sleep architecture (nervous system). Each value alone looks “borderline normal.” Together, they create significant dysfunction.

Effective AI in this context must map relationships between systems, understand how dysfunction in one area creates cascading effects in others, recognize patterns that don’t fit single-disease models, and provide integrated intervention strategies rather than system-by-system recommendations.

This requires training on functional medicine cases, not just conventional disease databases. It requires physician oversight from practitioners who understand multi-system interactions. And it requires AI architecture that prioritizes pattern integration over isolated value flagging.

The Clinical Clarity Engine at Longevitix specifically addresses this by contextualizing biomarkers within multi-system frameworks, identifying interaction patterns that single-system analysis would miss, and presenting integrated clinical pictures rather than lists of individual findings. This is how AI can actually support the complexity of longevity medicine practice rather than oversimplifying it.