Multimodal AI Model: What it is and why it’s a big deal for medicine

A chest X-ray tells you one thing. A troponin level tells you another. A 12-lead ECG adds a layer. The patient’s history fills in the gaps. But you, the physician, are the one integrating all of it in your head, under time pressure, often with incomplete information.

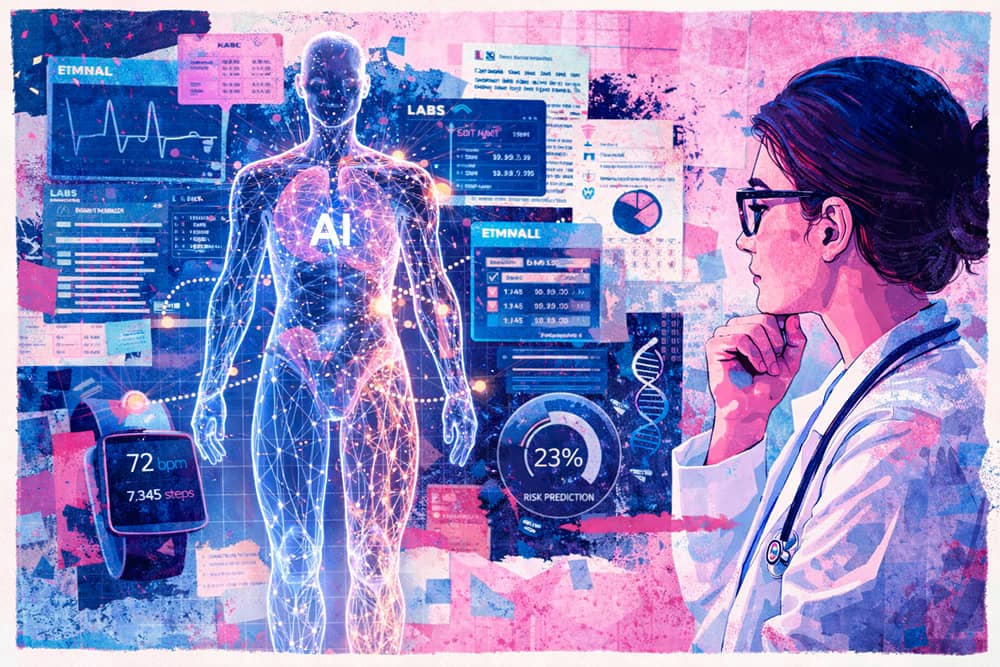

Now imagine a system that can do the same thing. One model that reads the imaging, interprets the labs, parses the waveform data, and synthesizes the clinical notes, all at once, in context, in seconds.

That’s what multimodal AI does, and it is moving faster toward the clinic than most physicians realize.

Beyond Single-Input AI: What “Multimodal” Actually Means

Most AI tools in medicine today are unimodal. They do one thing well: a radiology AI reads a chest CT. A natural language processing model summarizes a clinical note. A lab-interpretation tool flags abnormal values.

A multimodal AI model processes multiple types of data simultaneously (text, images, numerical values, audio, even genomic sequences) through a single architecture. Rather than running five separate tools across five separate workflows, a multimodal model ingests diverse data streams together and produces a unified clinical interpretation.

Google’s Med-PaLM M, for example, was among the first generalist biomedical AI systems to operate across clinical language, medical imaging, and genomics with the same set of model weights. In side-by-side evaluations, clinicians preferred Med-PaLM M–generated radiology reports over those produced by radiologists in up to 40% of cases. Google’s more recent Med-Gemini extended those capabilities to 3D imaging, generating CT reports for brain scans where more than half of the AI-generated reports matched the care recommendations a radiologist would make.

These are research-stage findings. But the trajectory is clear: multimodal AI is moving toward general-purpose clinical reasoning, not just pattern recognition in a single data type.

Why This Matters for Physicians Right Now

The clinical relevance of multimodal AI comes down to a simple reality: disease doesn’t present in a single data modality.

A patient with early-stage heart failure might show subtle changes on echocardiography, borderline BNP levels, mild fluid retention on physical exam, and declining activity on a wearable device. No single data point raises an alarm. But together, they tell a story that warrants intervention months or years before a hospitalization.

Multimodal AI is built to detect exactly these kinds of converging signals.
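To make the idea of converging signals concrete, here is a minimal sketch in Python. It is a toy late-fusion rule, not how any production multimodal model works (those typically learn joint representations). The `Signal` type, the normalized scores, and both thresholds are hypothetical values chosen for illustration and carry no clinical meaning. The noisy-OR combination also assumes the signals are independent, which real clinical data rarely are.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Signal:
    modality: str
    score: float  # hypothetical normalized abnormality score in [0, 1]


# Hypothetical thresholds, for illustration only (not clinically validated).
SINGLE_ALERT = 0.7
COMBINED_ALERT = 0.7


def single_alerts(signals):
    """Return the modalities whose own score would raise an alarm."""
    return [s.modality for s in signals if s.score >= SINGLE_ALERT]


def combined_score(signals):
    """Noisy-OR fusion: 1 minus the product of (1 - score) over all signals.

    Assumes the signals are independent, which is a strong simplification.
    """
    p_none = 1.0
    for s in signals:
        p_none *= 1.0 - s.score
    return 1.0 - p_none


# Illustrative values mirroring the heart-failure example in the text.
signals = [
    Signal("echocardiography", 0.30),
    Signal("bnp", 0.35),
    Signal("physical_exam", 0.25),
    Signal("wearable_activity", 0.30),
]

flagged_alone = single_alerts(signals)    # no modality crosses 0.7 by itself
fused = combined_score(signals)           # the fused score does cross 0.7
needs_review = fused >= COMBINED_ALERT
```

In this toy case every individual score sits well below the single-signal threshold, yet the fused score crosses the combined threshold. That is the pattern the heart-failure example describes.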

Dr. Eric Topol, one of the most recognized voices in medical AI, has written extensively about this shift. In his view, multimodal AI will enable physicians to “serially evaluate layers of data over the course of patients’ lives,” from DNA and RNA to anatomy, physiology, the microbiome, metabolome, and exposome, giving us “the ability to predict and forecast things in medicine at the individual level that we never had before.”

That is a fundamentally different capability from what any single-purpose AI tool offers.

Emerging Clinical Use Cases

Multimodal AI applications are already appearing across specialties:

- Radiology and imaging. AI systems reading a chest CT can now incorporate patient age, lab results, and prior medical history to generate risk-adjusted interpretations rather than reading the image in isolation.

- Cardiology. Models that combine ECG waveforms, cardiac imaging, biomarkers, and clinical notes can identify subclinical cardiac disease earlier than any single diagnostic alone.

- Ophthalmology. The combination of genetic data and retinal imaging through multimodal integration is enabling earlier diagnosis of retinal diseases, with implications for systemic vascular health screening.

- Preventive and longevity medicine. This is where multimodal AI arguably has its highest ceiling. Preventive care depends on synthesizing data that spans genomics, epigenetics, microbiome analysis, metabolomics, wearable biometrics, specialty labs, imaging, and longitudinal patient histories. No physician can hold all of that in working memory, and no unimodal AI tool can either.

The Data Fragmentation Problem and Why Multimodal AI Is Part of the Answer

If you practice preventive, functional, or longevity medicine today, you already know the operational bottleneck: every patient requires manual consolidation of data from eight or more distinct sources, including EHRs, specialty labs, genetic testing platforms, wearable data, imaging providers, and intake forms. A single comprehensive patient analysis can take five to ten hours. Some practitioners describe spending entire days building 15-page reports by hand, or needing two full days to work through a 100-page medical history.

This is the structural barrier that keeps evidence-based preventive care from scaling. The science exists to identify and reduce chronic disease risk decades before onset. But the infrastructure to apply that science efficiently across a panel of patients does not exist in most clinical workflows.

Some platforms have started addressing pieces of this problem by aggregating wearable data, labs, and device data into a unified patient view so clinicians can monitor risk and track outcomes in one place. Others take a similar approach with platforms trained on medical records, either generating risk predictions from personalized health data and evidence-based insights or connecting patient data with personalized treatment plans.

Each of these platforms addresses a real pain point. But the challenge goes deeper than aggregation. What physicians practicing preventive medicine need is not just a dashboard that shows all the data in one place. They need a system that reasons across all of it, the way a clinician would, but at a speed and scale that a human cannot sustain across a full patient panel.

This is the gap that clinically governed multimodal AI, with organ-system-level interpretation, evidence-based intervention planning, and longitudinal tracking, is designed to fill.

At Longevitix, this is the exact problem we set out to solve. Our clinical intelligence platform unifies multimodal patient data (genetics, epigenetics, microbiome, imaging, biometrics, EHRs, wearables, specialty labs, patient history, and more) into a single hub. AI-powered analysis turns what used to take five to ten hours of manual synthesis into minutes, with organ-system-level interpretation, risk stratification, and evidence-based intervention plans. Every recommendation traces back to published research, and every output remains fully editable by the physician.

The physician’s judgment stays at the center, but the complexity of data management does not.

Clinical AI Safety: The Non-Negotiable

Any conversation about multimodal AI in medicine has to address safety. A model that processes more data types has more surface area for error, and in clinical settings, errors have consequences.

This is an area where healthcare AI still has significant ground to cover. As NIH-funded research has shown, AI models can make mistakes in clinical reasoning even when they arrive at a correct diagnosis, raising questions about interpretability and reliability.

For clinically deployed multimodal AI, safety architecture needs to go beyond “the model was right.” It needs:

- Deterministic guardrails constraining probabilistic outputs. Language models are inherently probabilistic. In clinical settings, certain outputs carry unacceptable risk. What would a deterministic guardrail actually look like in this context? Drug-drug interaction blacklists. Hard-coded contraindication checks. Dose-range limits. Building deterministic safety layers around probabilistic reasoning, so that the system cannot recommend contraindicated interventions or deviate dangerously from established care standards, is a design requirement, not a feature.

- Traceability and source attribution. Every clinical recommendation should link back to its evidence base. If a system fails, you need to know how it failed and why, and so does the physician using it.

- Physician-in-the-loop design. AI that replaces physician judgment is not the goal. AI that handles the complexity of data synthesis so physicians can focus on clinical decision-making and patient relationships: that is the goal.
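As a rough illustration of the first two requirements above, the following Python sketch wraps a model-proposed recommendation in fixed rule checks. Everything in it is an assumption made for the example: the `Recommendation` type, the `guardrail_violations` function, the placeholder drug and condition names, and the dose limits are hypothetical and carry no clinical meaning. A real system would draw these tables from a maintained, clinically governed knowledge base.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Recommendation:
    drug: str
    daily_dose_mg: float
    sources: tuple[str, ...] = ()  # citations backing the recommendation


# Hypothetical rule tables with placeholder names (not real clinical data).
INTERACTION_BLOCKLIST = {frozenset({"drug_a", "drug_b"})}
CONTRAINDICATIONS = {"drug_c": {"condition_x"}}
DOSE_RANGE_MG = {"drug_a": (5.0, 40.0), "drug_c": (10.0, 100.0)}


def guardrail_violations(rec, current_meds, conditions):
    """Return every rule the recommendation breaks; an empty list means pass.

    The checks are plain lookups and comparisons, so the same input always
    yields the same result, regardless of how the model produced `rec`.
    """
    violations = []

    # Drug-drug interaction blocklist.
    for med in current_meds:
        if frozenset({rec.drug, med}) in INTERACTION_BLOCKLIST:
            violations.append(f"interaction: {rec.drug} + {med}")

    # Hard-coded contraindication checks.
    blocked = CONTRAINDICATIONS.get(rec.drug, set()) & set(conditions)
    for condition in sorted(blocked):
        violations.append(f"contraindicated with {condition}")

    # Dose-range limits; fail closed when no range is on file.
    limits = DOSE_RANGE_MG.get(rec.drug)
    if limits is None:
        violations.append(f"no dose range on file for {rec.drug}")
    else:
        low, high = limits
        if not low <= rec.daily_dose_mg <= high:
            violations.append(f"dose {rec.daily_dose_mg} mg outside {low}-{high} mg")

    # Traceability: a recommendation without sources is rejected.
    if not rec.sources:
        violations.append("no source attribution")

    return violations


proposed = Recommendation("drug_a", 80.0)
problems = guardrail_violations(proposed, current_meds=["drug_b"], conditions=[])
```

In this sketch a recommendation with any violation would be withheld and routed to the physician with the listed reasons, which keeps the final decision with the clinician rather than the model.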

The Physician’s Role in an Age of Multimodal AI

There is a legitimate concern among physicians that AI will diminish their role. The opposite is more likely true.

As Topol has argued, if physicians do not shape how AI is deployed, validated, and taught, those decisions will be made by others. The Lancet echoed this position in a 2025 editorial, writing that “clinicians must participate in the development of multimodal AI” to ensure it reflects real-world clinical needs. Physicians need to learn how to work with AI, question it, and understand its limitations. AI should give physicians “the gift of time” without compromising clinical depth, not replace the clinical reasoning and patient trust that define great care.

In preventive and longevity medicine, this dynamic is especially clear. The value a physician provides is not in manually consolidating lab reports or building spreadsheets of biomarker trends. It is in interpreting those patterns through clinical experience, communicating risk to a patient in a way that motivates action, designing an intervention plan that accounts for the patient’s life and preferences, and maintaining the kind of longitudinal relationship that keeps people engaged in their own health.

The trusted relationship between physician and patient is the cornerstone of meaningful, long-term health outcomes. Technology should protect that relationship by removing the administrative and data-management burden that erodes it, not by inserting itself between doctor and patient.

Multimodal AI, deployed with the right safety architecture and clinical governance, has the potential to do exactly that.

What Physicians Should Watch For

If you are evaluating multimodal AI tools for your practice, whether you are in primary care, cardiology, preventive medicine, or any specialty that deals with complex, multi-source patient data, here are the key questions to ask:

- Does the system reason across data types, or just display them? Aggregation is table stakes. Clinical interpretation across modalities is the differentiator.

- Is every recommendation traceable to evidence? If you cannot see the source behind a recommendation, you cannot trust it, and your patients should not have to.

- Can you edit and override AI outputs? Any system that presents its conclusions as final is not designed for clinical use. The physician should always have the last word.

- Does it integrate with your existing workflow? A platform that requires you to rebuild your practice around it creates more friction than it solves. The best clinical AI layers work alongside existing EHRs and workflows, not in place of them.

- Is there a longitudinal component? Preventive care is not a one-time analysis. It is a continuous feedback loop between data, intervention, and outcome. AI tools that support ongoing monitoring, behavioral tracking, and adaptive care plans are more clinically valuable than those built for single-encounter use.

Looking Ahead: 2026 and Beyond

The pace of development in multimodal biomedical AI is accelerating. A 2025 scoping review in Medical Image Analysis found that multimodal AI research in medicine has shifted from proof-of-concept studies to task-oriented frameworks for clinical translation. A separate analysis in Frontiers in Medicine reported that multimodal approaches are now being tested across diagnostic support, medical report generation, drug discovery, and conversational clinical AI. Meanwhile, a Frontiers in AI review of precision medicine applications showed growing integration of genomics, imaging, and EHR data into unified multimodal models.

By 2026, the expectation from healthcare AI experts surveyed by Wolters Kluwer is widespread implementation, not just in large academic medical centers, but increasingly in independent practices and specialty clinics that want to deliver data-driven, personalized care. The tools are getting more accessible, the evidence base is growing, and the clinical need has never been greater.

For physicians practicing preventive and longevity medicine, multimodal AI is not a future concept. It is the infrastructure layer that will determine whether evidence-based preventive care can scale beyond a handful of high-end concierge practices and become accessible to every patient who needs it.

Frequently Asked Questions

What is a multimodal AI model in healthcare?

A multimodal AI model in healthcare is an artificial intelligence system that processes multiple types of clinical data simultaneously, including medical images, lab results, clinical notes, genomic data, waveforms like ECGs, and wearable device outputs, through a single unified architecture. Unlike traditional AI tools that analyze one data type at a time, multimodal models integrate these diverse inputs to produce comprehensive clinical interpretations.

How is multimodal AI different from traditional AI in medicine?

Traditional medical AI tools are unimodal. They perform a single task, such as reading a chest X-ray or flagging abnormal lab values. Multimodal AI combines inputs from imaging, text, lab data, genomics, wearables, and other sources into one model. This allows the system to identify patterns that emerge only when multiple data types are considered together, similar to how a physician synthesizes information from different sources during clinical reasoning.

What are the clinical applications of multimodal AI for physicians?

Current and emerging clinical applications include risk-adjusted radiology interpretation that incorporates patient history and labs, early detection of subclinical cardiac disease through combined ECG, imaging, and biomarker analysis, retinal disease screening using genetic and imaging data, and comprehensive preventive medicine where the model synthesizes genomics, epigenetics, microbiome, metabolomics, and wearable data to stratify chronic disease risk.

Is multimodal AI safe for clinical use?

Safety remains an active area of development. Clinically deployed multimodal AI requires deterministic guardrails to constrain probabilistic model outputs, full traceability and source attribution for every recommendation, physician-in-the-loop design where clinicians can edit, override, and validate all AI outputs, and rigorous testing against established standards of care. No large multimodal AI model is currently FDA-cleared for independent clinical decision-making, but research shows that physician-AI collaboration can reduce clinical error rates.

How does multimodal AI support preventive and longevity medicine?

Preventive medicine requires integrating data from EHRs, specialty labs, genetic testing, epigenetic markers, microbiome analysis, wearable biometrics, imaging, and patient histories, often eight or more data sources per patient. Multimodal AI can synthesize these inputs into unified risk assessments and evidence-based intervention plans, reducing the time required for comprehensive patient analysis from hours to minutes and enabling physicians to deliver personalized preventive care at scale.

What should physicians look for when evaluating multimodal AI platforms?

Key evaluation criteria include whether the platform reasons across data types (rather than just displaying them), whether recommendations are traceable to published evidence, whether outputs are fully editable by the physician, whether the system integrates with existing EHR workflows without requiring a full technology overhaul, and whether it supports longitudinal monitoring with continuous feedback loops between data, interventions, and patient outcomes.

Will multimodal AI replace physicians?

No. Multimodal AI is designed to handle the complexity of data synthesis and pattern recognition across large, multi-source datasets, tasks that exceed what any individual can sustain across a full patient panel. The physician’s role in clinical judgment, patient communication, care planning, and the trusted doctor-patient relationship remains central. The goal of clinical AI is to give physicians more time for the work that requires human expertise, empathy, and trust.

What multimodal AI models are currently being developed for medicine?

Notable models include Google’s Med-PaLM M and Med-Gemini, which process clinical language, medical imaging, and genomic data through unified architectures. Google’s AMIE (Articulate Medical Intelligence Explorer) focuses on diagnostic dialogue. Multiple clinical AI platforms, including those focused on preventive and longevity medicine, are building multimodal capabilities that unify EHR data, labs, genetics, wearables, and imaging into single clinical intelligence hubs.