Everyone Has AI Now. So Why Is Healthcare Still Broken?

Shortly after launch announcements from OpenAI and Anthropic signaled the commoditization of diagnostic AI, Google quietly removed its AI Overviews feature from certain health-related search queries following an investigation by The Guardian that exposed dangerous inaccuracies. The AI had provided misleading information on cancer treatment, liver test results, and women’s health screenings that could have led patients to dismiss symptoms, delay treatment, or follow harmful advice.

This wasn’t an isolated incident. The AI told pancreatic cancer patients to avoid high-fat foods, which is the exact opposite of what should be recommended and could jeopardize a patient’s chances of tolerating chemotherapy or surgery. It claimed Pap tests screen for vaginal cancer when they only check for cervical cancer. When asked about normal ranges for liver blood tests, it provided numbers without accounting for age, sex, ethnicity, or nationality.

As Vanessa Hebditch, director of communications and policy at the British Liver Trust, noted: “Our bigger concern with all this is that it is nit-picking a single search result and Google can just shut off the AI Overviews for that but it’s not tackling the bigger issue of AI Overviews for health.”

These failures reveal a fundamental truth about AI in healthcare: access to powerful algorithms doesn’t guarantee clinical value. As AI becomes commoditized, the gap between general models and clinically validated tools grows wider. The difference isn’t just technical. It’s about patient safety, regulatory compliance, and whether physicians can trust the systems they’re being asked to integrate into their practice.

AI Is Now a Commodity. Healthcare Outcomes Aren’t Moving.

In January 2026, both OpenAI and Anthropic launched healthcare-focused AI offerings. OpenAI unveiled OpenAI for Healthcare with HIPAA-compliant support and physician evaluation frameworks. Days later, Anthropic responded with Claude for Healthcare, emphasizing safety, clinical documentation, and interoperability with CMS databases, ICD-10 coding, and FHIR standards.

These launches represent significant technical achievements. They also mark a shift in the healthcare AI landscape: sophisticated language models are now widely accessible. Startups can access APIs that summarize patient charts, transcribe clinical conversations, or generate documentation. The underlying technology, often built on the same generative AI foundations, has become broadly available.

Having the Technology Doesn’t Mean You Can Use It

Healthcare differs from other industries where deploying a new feature can be measured in weeks. Physicians work within regulated workflows that include safety protocols, documentation requirements, reimbursement processes, and consent procedures. These constraints make it difficult to implement generic AI tools without careful integration and customization.

Consider the data challenge. Preventive care clinics need to integrate information from medical histories, specialty labs covering genetics, epigenetics, microbiome, and hormonal panels, cognitive and functional assessments, intake questionnaires, progress notes, imaging, and wearable devices. Without a unified view, AI models risk working with incomplete context, limiting their clinical utility.

Physicians Are Adopting Fast, Then Hitting a Wall.

Physician adoption of AI has accelerated rapidly. According to a 2024 American Medical Association survey, 66% of U.S. physicians now use AI in their practice, up from 38% in 2023, representing a 78% increase in a single year. Most usage centers on documentation and administrative tasks, with 68% of physicians using AI for clinical documentation.

Yet significant reservations remain. A 2025 survey found that 40% of physicians believe AI is overhyped and won’t meet high expectations. The concerns aren’t about the technology itself but about clinical decision-making, overreliance, and the loss of human judgment that sustained patient outcomes require.

Google’s AI Gave Cancer Patients Advice That Could Kill Them

The Google AI Overviews incident wasn’t an edge case. A broader study found that AI Overviews for health information have error rates as high as 44%. This level of inaccuracy is unacceptable in healthcare, where misinformation can delay diagnosis, worsen outcomes, or lead patients to skip necessary treatment.

Medical Context Isn’t Optional, It’s Life or Death.

Medical advice requires nuance that short AI summaries often can’t provide. Lab reference ranges vary by demographics. Treatment recommendations depend on comorbidities, medication interactions, and individual risk factors. Generic AI models, trained on massive datasets, struggle to account for the clinical context that determines whether information is helpful or harmful.
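The point about reference ranges can be made concrete with a minimal sketch. It assumes a made-up analyte and placeholder numbers (none of these values are clinical reference ranges), and the names `Patient`, `PLACEHOLDER_RANGES`, and `interpret` are hypothetical. The idea it illustrates is simply that a result should not be interpreted at all when the demographic context is missing.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Patient:
    age: Optional[int] = None
    sex: Optional[str] = None  # "female" or "male" in this sketch


# PLACEHOLDER numbers for a made-up analyte. These are NOT clinical values.
# Each row: (sex, min_age, max_age, low, high)
PLACEHOLDER_RANGES = [
    ("female", 18, 49, 10.0, 30.0),
    ("female", 50, 120, 12.0, 36.0),
    ("male", 18, 49, 12.0, 40.0),
    ("male", 50, 120, 14.0, 44.0),
]


def interpret(value: float, patient: Patient) -> str:
    """Classify a result only when a matching demographic range exists."""
    if patient.age is None or patient.sex is None:
        # Refuse to guess: a context-free answer is the failure mode described above.
        return "needs clinician review: missing demographic context"
    for sex, min_age, max_age, low, high in PLACEHOLDER_RANGES:
        if sex == patient.sex and min_age <= patient.age <= max_age:
            if value < low:
                return "below range"
            if value > high:
                return "above range"
            return "within range"
    return "needs clinician review: no range for this patient group"
```

With these placeholder rows, the same value of 35.0 is "above range" for a 30-year-old female patient and "within range" for a 30-year-old male patient, and a patient with no recorded age or sex is routed to a clinician instead of receiving an answer.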

Health experts from organizations like Pancreatic Cancer UK and the British Liver Trust emphasized that medical guidance requires context beyond what a brief AI summary can deliver. Patients with serious conditions shouldn’t rely on simplified or incorrect advice when it could prevent them from receiving appropriate treatment.

Same Question, Different Answer. Every Time.

Another concern raised by medical experts was inconsistency. The same health queries produced different AI-generated answers at different times. This variability undermines trust and increases the risk that misinformation will influence health decisions. Clinical tools need to be reliable rather than probabilistic. That means grounding answers in curated, up-to-date databases that keep responses consistent and incorporate the latest clinical research.

Same Model, Radically Different Risk

| General AI (Search / Chat) | Clinical AI (Validated Systems) |
|---|---|
| Single snapshot | Longitudinal patient history |
| No demographic adjustment | Age / sex / ethnicity aware |
| Probabilistic answers | Deterministic protocols |
| No liability | Regulated accountability |
| No audit trail | Full documentation & review |

Clinical Integration Isn’t a Feature. It’s the Entire Product.

For AI to improve preventive care, it must align with the daily realities of clinical practice. This means building systems that work within existing workflows, meet regulatory requirements, and produce measurable improvements in health outcomes.

Safety Isn’t a Checkbox, It’s Mission-Critical Architecture.

Clinical AI needs validation frameworks that go beyond accuracy metrics. Tools must be tested across diverse patient populations, validated against clinical outcomes, and continuously monitored for errors. As of 2025, more than 1,200 AI-enabled medical devices have received FDA clearance in the U.S., with roughly 340 of them widely used to assist in diagnosing conditions such as strokes, brain tumors, and breast cancer. These specialized tools went through rigorous review processes that general-purpose AI models never face.

Safety isn’t just about algorithm performance. It includes fail-safe mechanisms, clear handoff protocols when AI flags uncertainties, and documentation trails that satisfy regulatory requirements. These safeguards are built into clinical systems from the ground up, not retrofitted after deployment.
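As a rough illustration of the handoff and documentation ideas above, the sketch below routes every AI suggestion to a clinician, marks low-confidence ones as uncertain, and writes an audit entry for each decision. The threshold, the `Suggestion` type, and the `route` function are assumptions made for this example, not a description of any particular product.

```python
import json
from dataclasses import dataclass
from datetime import datetime, timezone

# Hypothetical cutoff; a real system would set this through clinical validation.
CONFIDENCE_THRESHOLD = 0.85


@dataclass
class Suggestion:
    patient_id: str
    text: str
    confidence: float


def route(suggestion: Suggestion, audit_log: list) -> str:
    """Decide how a suggestion reaches the clinician and record the decision."""
    # Fail safe: NaN or out-of-range confidence fails this check and is escalated.
    confident = (
        0.0 <= suggestion.confidence <= 1.0
        and suggestion.confidence >= CONFIDENCE_THRESHOLD
    )
    # Both paths end with a clinician; the label only signals how much scrutiny is needed.
    decision = "queue_for_signoff" if confident else "escalate_uncertain"
    audit_log.append(json.dumps({
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "patient_id": suggestion.patient_id,
        "suggestion": suggestion.text,
        # Stored as text so a NaN value cannot produce invalid JSON.
        "confidence": str(suggestion.confidence),
        "decision": decision,
    }))
    return decision
```

The design choice worth noting is that there is no path on which a suggestion is applied without review. The confidence score changes only how the item is presented to the clinician, and every routing decision leaves a timestamped record.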

Half of Physicians Can’t Find the Data They Need

Half of physicians surveyed in 2025 still struggle to find relevant clinical information, and only 2% say that accessing timely patient data across systems isn’t a challenge. Fragmented or outdated data limits how useful AI can be in clinical care.

Effective clinical AI requires robust data infrastructure that pulls information from multiple sources, reconciles inconsistencies, and presents unified patient views. This infrastructure supports both AI-driven insights and human decision-making, ensuring that recommendations are based on complete, current information.
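A minimal sketch of the reconciliation step might look like the following. It assumes each source reports simple field/value observations with a date, keeps the most recent value per field, and retains superseded values that disagree so a clinician can see the discrepancy. The `Observation` type, the source names, and the `unify` function are hypothetical.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Observation:
    source: str       # e.g. "ehr", "wearable", "intake_form" (illustrative names)
    field: str
    value: str
    observed_on: date


def unify(observations):
    """Return (latest value per field, earlier values that disagreed)."""
    latest = {}
    conflicts = {}
    # Process oldest first so the newest observation for each field wins.
    for obs in sorted(observations, key=lambda o: o.observed_on):
        previous = latest.get(obs.field)
        if previous is not None and previous.value != obs.value:
            # Keep the superseded value visible instead of silently dropping it.
            conflicts.setdefault(obs.field, []).append(previous)
        latest[obs.field] = obs
    return latest, conflicts
```

"Most recent wins" is only one possible reconciliation rule, chosen here for brevity. A production system would likely also weigh source reliability and units, which this sketch does not attempt.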

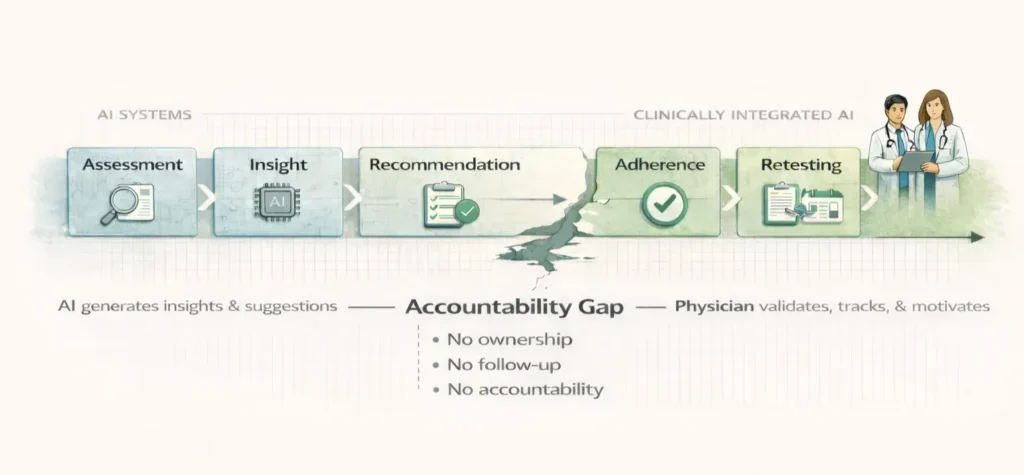

AI Can Only Hold You Accountable So Far. Humans Take You Further.

Preventive care, particularly interventions around sleep, nutrition, exercise, and supplementation, requires sustained human guidance and accountability. AI can provide insights and reminders, but only clinicians can hold patients accountable over months, adjust interventions based on retesting, and ensure evidence-based milestones are met.

Empathy Doesn’t Scale

AI adoption is growing, and 68% of physicians in 2025 recognize at least some advantage of AI in patient care, but they don’t see it replacing human judgment. Empathy, context, and understanding a person’s life circumstances can’t be encoded in models trained on datasets. These human aspects aren’t inefficiencies to be optimized away. They’re core components of care that any AI strategy must respect and reinforce.

Recommendations Don’t Change Lives – Accountability Does.

AI-generated recommendations don’t change behavior on their own. Studies on remote patient monitoring programs for heart failure and COPD show 20 to 25% reductions in 30-day readmissions, but these outcomes depend on human outreach. At UMass Memorial Health, a congestive heart failure program using AI-driven platforms cut 30-day readmissions by 50%, but the AI didn’t replace nurse-led engagement. It identified who needed calls and why, letting care teams focus their efforts effectively.
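The "who needed calls and why" pattern can be sketched as a simple triage list. This is not how the UMass Memorial program works internally, which the source does not describe. The fields, thresholds, and the `call_list` function are placeholders invented for illustration, and the thresholds are not clinical guidance.

```python
from dataclasses import dataclass


@dataclass
class MonitoringSummary:
    patient_id: str
    missed_readings: int
    weight_change_kg: float


# Placeholder thresholds for illustration only; NOT clinical guidance.
MAX_MISSED_READINGS = 2
MAX_WEIGHT_GAIN_KG = 2.0


def call_list(summaries):
    """Return (patient_id, reasons) pairs, most reasons first, for nurse outreach."""
    flagged = []
    for s in summaries:
        reasons = []
        if s.missed_readings > MAX_MISSED_READINGS:
            reasons.append(f"{s.missed_readings} missed readings")
        if s.weight_change_kg > MAX_WEIGHT_GAIN_KG:
            reasons.append(f"weight up {s.weight_change_kg:.1f} kg")
        if reasons:
            flagged.append((s.patient_id, reasons))
    # Patients with more triggered reasons are listed first; the call itself stays human.
    flagged.sort(key=lambda item: len(item[1]), reverse=True)
    return flagged
```

The output is a worklist, not an intervention. Each entry carries the reasons it was flagged, so the nurse making the call knows what to ask about.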

The same pattern applies to preventive care. Patients need ongoing support to maintain lifestyle changes. AI can track progress, flag concerning trends, and suggest adjustments. Clinicians provide the accountability, motivation, and course corrections that sustain long-term results.

We Built What Physicians Actually Asked For

When we built Longevitix, we started with a simple question: What would it take for physicians to confidently integrate AI into preventive care workflows? The answer wasn’t more sophisticated algorithms. It was about building systems that addressed the actual barriers to adoption.

Pattern Recognition Isn’t Medicine

Clinical AI needs to be grounded in validated protocols, not just pattern recognition. Our platform incorporates evidence-based guidelines for interventions, ensuring that recommendations align with current medical literature. When research evolves, the system updates accordingly, maintaining clinical validity over time.

Every Clinic Is Different; Generic Tools Fail Everywhere.

No two clinics operate identically. Some focus on executive health programs, others on metabolic optimization or athletic performance. Clinical AI needs to adapt to different practice models without requiring extensive customization. Our system allows clinics to configure protocols, testing schedules, and intervention pathways to match their approach while maintaining safety guardrails.

Compliance Isn’t an Afterthought When Lawyers Get Involved

Healthcare providers face strict documentation, consent, and reporting requirements. AI tools that ignore these requirements create liability risks rather than solving problems. We built compliance into every workflow, from HIPAA-compliant data handling to audit trails that satisfy regulatory oversight.

AI Suggests, Physicians Decide. Always!

A clinical trial found that a large language model working alone scored about 16 percentage points higher on diagnostic reasoning than physicians using conventional resources. Reporting on studies of medical chatbots has likewise found that AI diagnostic tools can outperform many physicians on case vignettes (for example, 90% accuracy for AI versus about 74% for doctors in one study).

Research summarized in a medical economics article found physicians supported by AI performed better than physicians without AI and better than the AI on its own in some clinical decision tasks.

AI should augment clinical judgment, not replace it. Our platform surfaces insights and recommendations, but clinicians make the final decisions. This approach maintains accountability while leveraging AI’s ability to process large amounts of data, identify patterns, and flag areas needing attention.

Access to Algorithms Means Nothing Without This

AI in healthcare has reached an inflection point. The technology is no longer experimental. It’s becoming infrastructure. But as Google’s AI Overviews incident demonstrates, making AI broadly accessible doesn’t make it clinically safe or useful.

The difference between AI that helps and AI that harms comes down to three things: safety validation that meets clinical standards, integration with actual physician workflows, and respect for the human elements of care that technology can support but never replace.

Preventive care presents unique challenges. Unlike acute care, where interventions produce rapid results, preventive medicine requires sustained engagement over months or years. Patients need ongoing education, motivation, and accountability. Clinics need systems that coordinate specialty testing, track interventions, monitor progress, and adjust protocols based on results.

General AI models can’t handle this complexity on their own. They lack the clinical context, workflow integration, and safety protocols that preventive care demands. This isn’t a criticism of the technology. It’s recognition that healthcare requires more than algorithms.

The Competitive Advantage Just Shifted

As AI models become commoditized, the competitive advantage shifts from access to implementation. The question isn’t whether clinics use AI but whether they use AI that’s been built for their specific needs, validated against clinical outcomes, and integrated into workflows that clinicians actually follow.

For preventive care, this means systems that coordinate the entire patient journey, from initial assessment through ongoing optimization. It means tools that help clinicians deliver personalized interventions at scale while maintaining the relationships that drive behavioral change. And it means platforms that evolve with medical evidence, regulatory requirements, and clinical best practices.

The future of healthcare AI isn’t about replacing physicians. It’s about giving them the infrastructure they need to practice preventive medicine effectively, safely, and at scale. That’s the gap we’re working to fill.

What We’ve Actually Built

The commoditization of AI models creates both opportunities and risks for healthcare. While powerful algorithms are now accessible, turning them into clinically valuable tools requires deep understanding of physician workflows, regulatory requirements, and the human elements of care that technology supports but never replaces.

At Longevitix, we’re focused on building infrastructure that helps preventive care clinics deliver personalized, evidence-based interventions at scale. Our platform coordinates specialty testing, tracks patient progress, and surfaces insights that help clinicians make informed decisions. But we never lose sight of what matters most: the physician-patient relationship that drives sustained health improvement.

Frequently Asked Questions

Why did Google remove AI Overviews from health searches?

Google removed AI Overviews from certain health-related queries after a Guardian investigation found the feature was providing misleading medical information. Examples included incorrect guidance for pancreatic cancer patients, liver test ranges that ignored demographic factors, and false information about cancer screenings. Health experts warned these errors could lead people to dismiss symptoms, delay treatment, or follow harmful advice.

How quickly are physicians adopting AI in their practices?

Physician adoption of AI has accelerated dramatically. According to the American Medical Association, 66% of U.S. physicians used AI in their practice in 2024, up from 38% in 2023, representing a 78% increase in a single year. Most usage focuses on clinical documentation (68%) and administrative tasks, with physicians citing reduced administrative burden as the primary benefit.

What makes healthcare AI different from AI in other industries?

Healthcare AI must meet stricter safety, regulatory, and validation standards than AI in other sectors. Medical decisions affect patient outcomes, so tools need clinical validation across diverse populations, continuous error monitoring, and fail-safe mechanisms. Healthcare also involves complex workflows, regulatory documentation requirements, and data interoperability challenges that don’t exist in most industries. Generic AI models can’t address these requirements without significant customization.

Can AI replace physicians in preventive care?

No. While AI can process data, identify patterns, and generate recommendations, preventive care requires sustained human guidance that technology can’t replicate. Patients need accountability, motivation, and personalized coaching over months or years to maintain lifestyle changes. Clinicians provide the empathy, context, and judgment necessary to adjust interventions based on individual circumstances. AI should augment these human elements, not replace them.

What are the biggest barriers to AI adoption in healthcare?

The main barriers include data fragmentation (50% of physicians struggle to find relevant clinical information across systems), lack of interoperability between different health IT systems, steep learning curves for new tools, and concerns about accuracy in clinical decision-making. Additionally, 40% of physicians believe AI is overhyped and won’t meet high expectations, reflecting skepticism about implementation rather than the technology itself.

How can clinics ensure the AI tools they use are safe and effective?

Clinics should prioritize AI tools that have been clinically validated, meet regulatory requirements, and integrate with existing workflows. Look for platforms with FDA approval or clearance where applicable, evidence-based protocol implementation, HIPAA-compliant data handling, audit trails for regulatory oversight, and clear mechanisms for clinician oversight of AI-generated recommendations. Purpose-built healthcare platforms offer these safeguards more reliably than generic AI models retrofitted for clinical use.